Building a Modern Data Foundation in the Cloud: Data Lakes and Data Lakehouses as Key Enablers

Abstract

The exponential growth of data and

the increasing complexity of data landscapes have driven organizations to seek

modern, scalable, and agile solutions for data management and analytics.

Cloud-native data lakes and data lakehouses have emerged as key enablers in

building a robust data foundation that can support diverse data types,

workloads, and use cases. This paper explores the architectural patterns,

technologies, and best practices for leveraging data lakes and data lakehouses

in the cloud. It delves into the benefits of cloud-native solutions, such as

scalability, flexibility, and cost-effectiveness, and discusses how

organizations can overcome the challenges associated with big data management

and analytics. The paper also highlights the symbiotic relationship between

data lakes/data lakehouses and DataOps, showcasing how these methodologies can

work in tandem to streamline data pipelines, ensure data quality, and

accelerate time-to-insights. By examining real-world use cases and

implementation strategies, this paper provides valuable guidance for

organizations seeking to build a modern data foundation in the cloud and unlock

the full potential of their data assets.

Keywords: Data Lake, Data Lakehouse, Cloud-Native, Data

Management, Data Analytics, Big Data, Scalability, Flexibility,

Cost-Effectiveness, DataOps, ETL, Metadata, Data Governance, ACID Transactions.,

Data as a Service, Inventory Availability, AWS, Data Migration Service,

Kinesis, Glue, Step Functions, Amazon S3, AWS File Transfer, Real-time Data

Processing, Batch Processing, Data Ingestion, Data Storage, Data Transfer,

Retail, Inventory Management, Cloud Computing, Scalability, Performance

Optimization.

1. Introduction

The modern enterprise operates in a

data-rich environment, where vast amounts of structured, semi-structured, and

unstructured data are generated from various sources. This data deluge presents

both opportunities and challenges. On the one hand, it holds the potential to

unlock valuable insights that can drive innovation, improve decision-making,

and create a competitive advantage. On the other hand, it poses significant

challenges in terms of storage, management, processing, and analysis.

Traditional data management

approaches, often reliant on on-premises data warehouses and complex ETL

processes, are struggling to keep pace with the scale, variety, and velocity of

modern data. These legacy systems are often inflexible, expensive, and time-consuming,

hindering organizations' ability to extract timely and actionable insights from

their data.

Cloud computing has emerged as a

transformative force in the IT landscape, offering scalability, flexibility,

and cost-effectiveness. Cloud-native technologies and services have

revolutionized the way organizations approach data management and analytics,

enabling them to build and manage data platforms that can handle the

complexities of modern data.

Data lakes and data lakehouses,

particularly when implemented in cloud-native environments, have become key

enablers in building a modern data foundation. Data lakes provide a scalable

and cost-effective repository for storing raw data in its native format, while

data lakehouses add a layer of structure and governance, enabling organizations

to perform complex analytics and machine learning directly on the data lake.

This paper aims to explore the

architectural patterns, technologies, and best practices for leveraging data

lakes and data lakehouses in the cloud. It will delve into the benefits of

cloud-native solutions and discuss how organizations can overcome the challenges

associated with big data management and analytics. The paper will also

highlight the symbiotic relationship between data lakes/data lakehouses and

DataOps, showcasing how these methodologies can work in tandem to streamline

data pipelines, ensure data quality, and accelerate time-to-insights. By

examining real-world use cases and implementation strategies, this paper will

provide valuable guidance for organizations seeking to build a modern data

foundation in the cloud and unlock the full potential of their data assets.

2. Literature Review

The evolution of data management

and analytics has been significantly influenced by the advent of cloud

computing and the rise of big data. The limitations of traditional on-premises

data warehouses in handling the scale, variety, and velocity of modern data

have led to the exploration of new architectural patterns and technologies. The

concept of the data lake, as a scalable and cost-effective repository for

storing raw data in its native format, has gained significant traction in

recent years. The flexibility of data lakes in capturing and storing diverse

data types at scale provides a foundation for data exploration and discovery.

However, the lack of structure and governance in data lakes has also been

recognized as a challenge, particularly for complex analytics and reporting.

The emergence of the data lakehouse paradigm addresses this challenge by adding

a layer of structure and metadata management to the data lake, enabling

organizations to perform advanced analytics and machine learning directly on the

data lake. The adoption of cloud-native technologies has further accelerated

the evolution of data lakes and data lakehouses. The scalability, flexibility,

and cost-effectiveness of cloud platforms make them ideal for building and

managing modern data foundations. The availability of managed services,

serverless computing, and other cloud-native features simplifies the deployment

and operation of data lakes and data lakehouses, reducing the operational

overhead and complexity. The symbiotic relationship between data lakes/data

lakehouses and DataOps has also been recognized as a key enabler for successful

data management and analytics initiatives. The principles of DataOps promote

collaboration, automation, and continuous improvement throughout the data lifecycle.

By applying DataOps practices to data lakes and data lakehouses, organizations

can streamline data pipelines, enhance data quality, and accelerate the

delivery of insights.

3. Charactiristics and Benefits

of Data Lake and Data Lakehouse

Data lakes and data lakehouses, as

architectural patterns, offer distinct characteristics and advantages that

contribute to their growing popularity in modern data management. In this

section, we will explore these characteristics and benefits in detail,

highlighting their relevance in cloud-native environments.

I. Data Lakes: Scalability,

Flexibility, and Cost-Efficiency

- Scalability: Data lakes are inherently designed to

handle massive volumes of data, often in the petabyte or exabyte range. In

cloud environments, this scalability is further amplified by the virtually

limitless storage capacity and elastic compute resources offered by cloud

providers. This allows organizations to seamlessly scale their data lakes to

accommodate growing data volumes without the need for upfront capacity planning

or infrastructure investments.

- Flexibility: Data lakes embrace a schema-on-read

approach, allowing data to be ingested in its raw format without the need for

upfront transformation or structuring. This flexibility is particularly

valuable in cloud environments, where organizations often deal with diverse

data sources and formats. It enables them to capture and store data

"as-is," preserving its richness and complexity for future

exploration and analysis.

- Cost-Effectiveness: Cloud-native data lakes leverage low-cost object storage services, such as Amazon S3, Azure Blob Storage, or Google Cloud Storage, making them a cost-effective solution for storing large volumes of data. Additionally, the ability to store data in its raw format eliminates the need for expensive ETL processes, further reducing costs. Cloud providers also offer various pricing models, such as pay-as-you-go and tiered storage, allowing organizations to optimize their storage costs based on their usage patterns.

II. Data Lakehouses:

Structure, Governance, and Performance

- Structure and Governance: While data lakes offer flexibility, they

may lack the structure and governance required for certain use cases,

particularly those involving complex analytics and reporting. Data lakehouses

address this challenge by adding a layer of structure and metadata management

to the data lake, enabling organizations to define schemas, enforce data

quality rules, and track data lineage. This structure and governance facilitate

data discovery, access control, and compliance, ensuring that data is used

responsibly and effectively.

- ACID Transactions: Data lakehouses support ACID transactions,

ensuring data integrity and consistency even in the face of concurrent access

and updates. This is crucial for supporting mission-critical applications and

ensuring data accuracy, especially in cloud environments where multiple users

and services may be accessing and modifying data simultaneously.

- Performance Optimization: Data lakehouses leverage advanced data processing and query optimization techniques to deliver high performance for complex analytics and machine learning workloads. In cloud environments, this performance optimization is further enhanced by the ability to leverage powerful compute resources and distributed processing frameworks, such as Apache Spark, to accelerate data analysis and extract insights quickly.

III. The Synergy of Data

Lakes and Data Lakehouses in the Cloud

In cloud-native environments, data

lakes and data lakehouses can work in synergy to create a powerful and flexible

data platform. Data lakes can serve as the landing zone for raw data, while

data lakehouses can provide the structure, governance, and performance

optimization needed for advanced analytics and reporting. This combination

allows organizations to leverage the best of both worlds, enabling them to

store and analyze diverse data types at scale while ensuring data quality,

consistency, and security.

4. Advantages of Cloud Native

Datalakes and Datalakehouses

The cloud has revolutionized the

way organizations approach data management and analytics. Cloud-native

solutions offer a range of advantages that make them particularly well-suited

for building and managing data lakes and data lakehouses. In this section, we

will explore these advantages in detail, highlighting how they enable

organizations to overcome the challenges of big data and unlock the full

potential of their data assets.

a. Scalability

and Elasticity: The

cloud's ability to scale resources on demand is a game-changer for data lakes

and data lakehouses. Organizations can seamlessly handle massive volumes of

data, often in the petabyte or exabyte range, without worrying about

infrastructure limitations. The elasticity of the cloud allows for dynamic

scaling of compute and storage resources based on workload demands, ensuring

optimal performance and cost-efficiency.

b. Flexibility and Agility: Cloud-native solutions offer a wide array

of services and tools for data ingestion, transformation, analysis, and

visualization. This flexibility empowers organizations to choose the best-fit

technologies for their specific needs and easily adapt their data architecture

as requirements evolve. The cloud's pay-as-you-go model further enhances

agility, allowing organizations to experiment with new technologies and

approaches without significant upfront investments.

c. Cost-Effectiveness: Cloud platforms typically offer

pay-as-you-go pricing models, enabling organizations to pay only for the

resources they consume. This eliminates the need for large capital expenditures

on hardware and infrastructure, making data lakes and data lakehouses more

accessible and affordable. Additionally, the cloud's ability to scale resources

dynamically helps optimize costs by avoiding overprovisioning.

d. Managed Services: Cloud vendors provide a rich ecosystem of

managed services that simplify the deployment and management of data lakes and

data lakehouses. These services, such as data cataloging, metadata management,

and security, reduce the operational overhead and complexity, allowing

organizations to focus on deriving value from their data rather than managing

infrastructure.

e. Serverless

Computing: Serverless

computing, a key feature of cloud-native architectures, allows organizations to

run code without provisioning or managing servers. These further streamlines

operations and enables automatic scaling of data processing workloads based on

demand. Serverless computing can significantly reduce costs and improve

efficiency, especially for workloads with variable or unpredictable usage

patterns

f. High

Availability and Disaster Recovery: Cloud platforms offer built-in high availability and

disaster recovery capabilities, ensuring that data lakes and data lakehouses

remain accessible and operational even in the face of outages or failures. This

resilience is critical for organizations that rely on their data for

mission-critical applications and decision-making.

g. Collaboration and Data Sharing: Cloud-native data lakes and data

lakehouses facilitate collaboration and data sharing across teams and

organizations. By providing a centralized and accessible platform for data

storage and analysis, these solutions enable seamless collaboration between

data engineers, data scientists, business analysts, and other stakeholders.

This fosters a data-driven culture and accelerates the pace of innovation.

5. Layered Architecture of Datalake and Datalakehouse

I. Introduction:

Data lakes and data lakehouses have

emerged as powerful solutions for modern data management. Both offer the

flexibility to store vast amounts of structured, semi-structured, and

unstructured data in its native format. However, to effectively harness the

potential of these architectures, a well-defined layered approach is crucial.

This layered structure organizes data into distinct zones, each serving a

specific purpose in the data lifecycle. The image below provides a visual

representation of the typical layers involved in a data lake or data lakehouse

architecture.

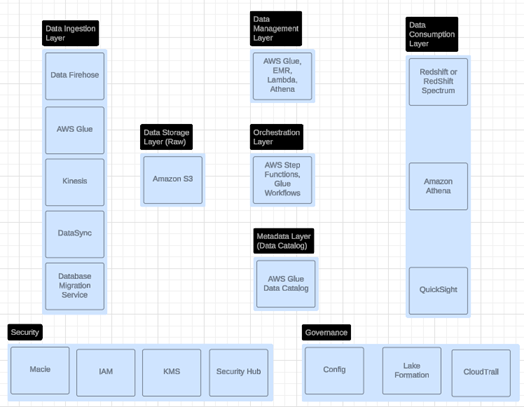

Figure 1: The diagram illustrates

the layered architecture of a data lake or data lakehouse.

I. Data Ingestion Layer:

The data ingestion layer acts as

the gateway for data to enter the data lake or data lakehouse. It captures and

ingests raw data from a wide array of sources, including databases, APIs, IoT

devices, social media feeds, and more. Various data ingestion methods are

employed, such as:

- Batch processing: Periodically

ingests large volumes of data in batches.

- Streaming

ingestion:

Continuously captures and processes real-time data streams.

- Change data

capture (CDC):

Efficiently captures and processes only the changes made to source data.

Data validation and cleansing are

crucial at this stage to ensure data quality and consistency before further

processing. Cloud services like AWS Glue, Azure Data Factory, and Google Cloud

Dataflow provide robust capabilities for data ingestion and transformation.

II. Data Storage Layer (Raw

Data Zone):

The data storage layer, also known

as the raw data zone, serves as the repository for storing raw, unprocessed

data in its original format. This layer prioritizes scalability and

cost-effectiveness, often leveraging cloud object storage services like Amazon

S3, Azure Data Lake Storage Gen2, and Google Cloud Storage. Data security and

access control are paramount at this layer to protect sensitive information.

III. Data Management Layer

(Stage 1, Stage 2, .. Stage N):

The data management layer

transforms and refines raw data into curated datasets suitable for analysis.

This layer involves multiple stages, each performing specific data processing

tasks:

- Data cleansing: Removes errors,

inconsistencies, and duplicates from the data.

- Data

transformation:

Converts data into a structured format and applies business rules.

- Data aggregation: Summarizes and

combines data to derive meaningful insights.

- Data enrichment: Augments data

with additional information from external sources.

Data processing engines like Apache

Spark, Hive, and Presto are commonly used in this layer to execute complex data

transformations efficiently. Cloud services like Amazon EMR, Azure Databricks,

and Google Cloud Dataproc offer managed environments for running these

processing engines.

IV. Metadata Layer (Data

Catalog):

The metadata layer plays a critical

role in data discovery, understanding, and governance. It captures and

organizes metadata, which describes the characteristics and context of data

assets. Data catalogs like AWS Glue Data Catalog, Azure Purview, and Google

Cloud Data Catalog provide a centralized repository for metadata management,

enabling users to easily search, browse, and understand the available data.

V. Data Consumption Layer

(KPI Data, Data as a Service Platform, KPI Dashboard):

The data consumption layer provides

various mechanisms for users to access and consume data for different purposes,

such as analytics, reporting, and machine learning. It supports different data

consumption patterns:

- Interactive

querying:

Enables users to explore and analyze data in real-time using SQL-like queries.

- Batch processing: Processes large

volumes of data in scheduled or on-demand batches.

- Real-time

streaming:

Continuously processes and analyzes streaming data.

Data visualization and BI tools

play a crucial role in this layer, enabling users to create interactive

dashboards and reports to gain insights from the data. Cloud services like

Amazon Athena, Azure Synapse Analytics, and Google BigQuery offer powerful querying

and analytics capabilities.

VI. Orchestration Layer

(Workflow Orchestration Engine):

The orchestration layer manages the

complex data pipelines and workflows that span across the different layers.

Workflow orchestration engines like AWS Step Functions, Azure Data Factory

pipelines, and Google Cloud Composer schedule, coordinate, and monitor the

execution of data processing tasks. They ensure that data flows seamlessly

through the different stages, handling dependencies, error handling, and

retries.

VII. Conclusion:

The layered architecture provides a

structured approach to organizing and managing data in data lakes and data

lakehouses. Each layer plays a specific role in the data lifecycle, from

ingestion to consumption. Cloud-native solutions offer scalability, flexibility,

and cost-effectiveness across all layers, enabling organizations to build

robust and agile data platforms. By adopting this layered approach,

organizations can unlock the full potential of their data and drive data-driven

innovation.

6. Cloud Vendor Offerings for Datalakes and

Datalakehouses

The major cloud providers, Amazon Web

Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP), have

recognized the growing importance of data lakes and data lakehouses and have

invested heavily in developing comprehensive suites of services to support

these architectures. In this section, we will provide a comparative overview of

the key offerings from each vendor, highlighting their strengths and unique

features. We will also discuss other prominent data lakehouse solutions, such

as Snowflake, to provide a broader perspective on the market and available

technologies.

7. Amazon Web Services (AWS)

The below diagram illustrates the

layered architecture of a data lake or data lakehouse on AWS, showcasing the

key services involved in each layer and the interplay between them.

A. Data Lake Offerings

- Data Lake Storage: Amazon S3 - A

highly scalable and durable object storage service that serves as the

foundation for data lakes on AWS. It offers various storage classes to optimize

costs based on data access patterns and provides features like lifecycle

management and versioning for efficient data management.

- Data Ingestion and Integration:

- AWS Glue - A serverless data integration

service that simplifies the discovery, preparation, and movement of data

between various data sources and targets. It can automate many of the ETL tasks

associated with data lakes.

- Amazon Kinesis - A platform for

streaming data on AWS, enabling real-time data ingestion and processing.

- AWS Database

Migration Service (DMS): A service that helps migrate databases to and from AWS,

simplifying the process of consolidating data into a data lake.

- AWS Data Pipeline: A web service

that helps you reliably process and move data between different AWS compute and

storage services, as well as on-premises data sources, at specified intervals.

- Data Cataloging and Metadata Management: AWS Glue Data Catalog - A fully managed metadata repository that

makes it easy to discover, organize, and manage data assets across AWS

services.

- Interactive Query Service: Amazon

Athena - A serverless interactive query service that allows users to analyze

data in Amazon S3 using standard SQL.

- Security and Governance:

- AWS Lake Formation - A fully managed

service that simplifies the setup and management of data lakes, making it

easier to ingest, clean, catalog, and secure data. It provides a centralized

metadata repository and fine-grained access controls to ensure data governance

and compliance.

- Amazon Macie - A fully managed data

security and data privacy service that uses machine learning and pattern

matching to discover and protect sensitive data in AWS.

- AWS IAM - AWS Identity and Access

Management (IAM) enables you to manage access to AWS services and resources

securely.

B. Data Lakehouse Offerings

- Data Lakehouse Solution: Amazon

Redshift Spectrum, AWS Lake Formation

- Key Features:

- Query data directly from S3 without the need to load data into

Redshift.

- ACID transactions ensure data integrity and consistency.

- Schema flexibility allows for schema evolution and adaptation as

data requirements change.

- Performance optimization techniques deliver high performance for complex analytics and machine learning workloads.

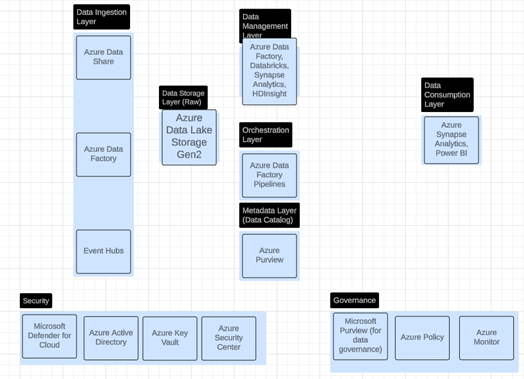

8. Microsoft Azure

The below diagram illustrates the

layered architecture of a data lake or data lakehouse on AWS, showcasing the

key services involved in each layer and the interplay between them.

A. Data Lake Offerings

- Data Lake Storage: Azure Data Lake

Storage Gen2 - A highly scalable and cost-effective data lake storage solution

that combines the capabilities of Azure Blob Storage with a hierarchical

namespace. It offers features like fine-grained access control and data

lifecycle management for efficient data governance.

- Data Ingestion and Integration:

- Azure Data Factory - A fully managed

data integration service that enables organizations to create, schedule, and

manage data pipelines in a visual and code-free environment. It supports a wide

range of data sources and targets, making it easy to ingest and transform data

for data lakes.

- Azure Event Hubs - A fully

managed, real-time data ingestion service that can handle millions of events

per second.

- Azure Data Migration Service: This

service helps migrate databases to Azure, aiding in data consolidation for data

lakes.

- Data Cataloging and Metadata Management: Azure Purview - A unified data governance service that helps you

manage and govern your on-premises, multi-cloud, and SaaS data.

- Interactive Query Service: Azure

Synapse Serverless SQL pools - Enables you to query data directly in your data

lake using SQL, without the need to move or transform data.

- Security and Governance:

- Azure Active Directory - Provides

cloud-based identity and access management services.

- Azure Security Center - A unified infrastructure security

management system that strengthens the security posture of your data centers.

B. Data Lakehouse Offerings

- Data Lakehouse Solution: Azure

Synapse Analytics

- Key Features:

- Unified analytics platform that combines enterprise data warehousing

and big data analytics

- Built-in support for data lakes, enabling organizations to perform

analytics directly on data stored in Azure Data Lake Storage Gen2

- Leverages T-SQL for querying data across the data warehouse and data lake

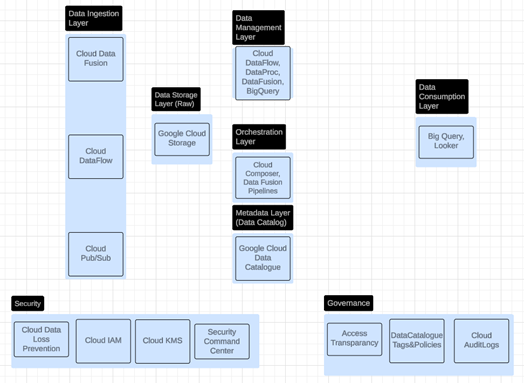

9. Google Cloud Platform (GCP)

The below diagram illustrates the

layered architecture of a data lake or data lakehouse on AWS, showcasing the

key services involved in each layer and the interplay between them.

A. Data Lake Offerings

- Data Lake Storage: Google Cloud

Storage - A highly scalable and durable object storage service that provides a

foundation for data lakes on GCP. It offers various storage classes and

features like lifecycle management and versioning for efficient data

management.

- Data Ingestion and Integration:

- Google Cloud Dataflow - A fully managed, serverless data processing service that enables

stream and batch data processing at scale. It can be used to build and manage

data pipelines for data lakes.

- Pub/Sub

- A fully managed real-time messaging service that

allows you to send and receive messages between independent applications

- Database Migration Service: Google offers services like Cloud Data Fusion and third-party

integrations to facilitate database migration to GCP, supporting data

consolidation into data lakes.

- Data Cataloging and Metadata Management: Google Cloud Data Catalog - A fully managed and scalable metadata

management service that empowers organizations to discover, manage and

understand their data assets.

- Interactive Query Service: BigQuery

- A fully managed, serverless data warehouse that enables scalable and

cost-effective analysis of massive datasets. It can be integrated with data

lakes to provide a unified platform for data storage and analysis.

- Security and Governance:

- Google Cloud IAM - Google Cloud Identity and Access Management

(IAM) lets administrators authorize who can act on specific resources, giving

you full control and visibility to manage Google Cloud resources centrally.

- Cloud Data Loss Prevention - A fully managed service designed to help you discover, classify,

and protect your most sensitive data.

B. Data Lakehouse Offerings

- Data Lakehouse Solution: Big Query,

Dataproc Metastore

- Key Features:

- Serverless data warehouse that enables scalable and cost-effective

analysis of massive datasets.

- Supports querying data directly from Google Cloud Storage using

standard SQL.

- Dataproc Metastore provides a centralized metadata repository for data lakes, enabling data discovery and governance.

10. Other Prominent Data Lakehouse Offerings

While the major cloud providers

offer robust solutions for data lakes and data lakehouses, there are also other

prominent players in the market that provide compelling alternatives. These

solutions often leverage cloud infrastructure and services but may have their

own unique architectures and capabilities.

A. Data Lake Offerings

- Currently, there are no widely recognized standalone data lake offerings from third-party vendors that rival the scale and capabilities of the major cloud providers. Most organizations leverage the object storage and data processing services provided by the cloud platforms to build their data lakes.

B. Data Lakehouse Offerings

- Snowflake: A cloud-built data

warehouse that offers a unique architecture for separating storage and compute,

enabling independent scaling and high performance. It supports a variety of

data workloads, including data warehousing, data lakes, and data science.

- Databricks Delta Lake: An

open-source storage layer that brings ACID transactions and other data

management capabilities to data lakes. It integrates seamlessly with Apache

Spark, making it a popular choice for building data lakehouses on various cloud

platforms.

- ACID

Transactions:

Ensures data integrity and consistency, even with concurrent reads and writes,

making it suitable for production workloads.

- Schema

Enforcement and Evolution: Provides schema validation and enforcement to prevent data

corruption and allows for schema changes without disrupting existing pipelines.

- Time

Travel:

Enables querying past versions of data, facilitating data recovery and

auditing.

- Performance

Optimization:

Leverages data skipping and Z-ordering for faster query performance.

- Dremio: A data lake engine that enables SQL-based querying and analysis of data directly in data lakes. It leverages Apache Arrow for high-performance data access and supports a variety of data sources and formats.

These are just a few examples of

the many data lakehouse solutions available in the market today. The choice of

the right solution will depend on the specific needs and requirements of each

organization. Factors such as data volume, data variety, workload types,

performance requirements, and cost considerations should all be considered when

evaluating different options.

11. Implementation

of Cloud-Native Datalakes and Data Lakehouses

The successful implementation of

data lakes and data lakehouses in the cloud requires careful planning and

consideration of various factors. In this section, we will explore some of the

key considerations that organizations need to address to ensure the effectiveness

and sustainability of their cloud-native data solutions.

- Data Architecture and Design: The foundation of any successful data lake or data lake house implementation lies in a well-defined and scalable architecture. Organizations need to consider factors such as data ingestion patterns, storage requirements, processing needs, and access patterns when designing their architecture. The cloud offers a variety of storage options, compute services, and data processing engines, and organizations need to choose the right combination of these services to meet their specific needs.

- Data Ingestion and Integration: The ability to efficiently ingest and integrate data from diverse sources is critical for data lakes and data lakehouses. Organizations need to consider the volume, velocity, and variety of their data when choosing data ingestion tools and techniques. The cloud offers various options for data ingestion, including batch processing, streaming ingestion, and change data capture (CDC). Additionally, organizations need to ensure seamless integration of data from different sources, both on-premises and in the cloud, to create a unified view of their data assets.

- Data Governance and Security: Data governance and security are paramount in any data management initiative, and cloud-native data lakes and data lakehouses are no exception. Organizations need to establish clear policies and procedures for data access, data quality, data lineage, and data retention. The cloud offers various tools and services for data governance and security, such as access control, encryption, data masking, and auditing. Organizations need to leverage these tools to ensure that their data is protected and used responsibly.

- Metadata Management and Data Cataloging: Metadata management and data cataloging play a crucial role in enabling data discovery, understanding, and governance within data lakes and data lakehouses. Organizations need to implement robust metadata management practices to capture and maintain information about their data assets, such as data schemas, data lineage, and data quality metrics. Cloud-native solutions offer various tools and services for metadata management and data cataloging, making it easier for organizations to organize, discover, and govern their data.

- Performance Optimization and Cost Management: Performance and cost management are critical considerations for cloud-native data lakes and data lakehouses. Organizations need to design their solutions to handle large-scale data processing and analytics efficiently while optimizing costs. The cloud offers various tools and techniques for performance optimization, such as caching, indexing, and query optimization. Additionally, organizations can leverage the cloud's pay-as-you-go pricing model and auto-scaling capabilities to optimize their costs based on their usage patterns.

- DataOps and Automation: DataOps, a methodology that applies DevOps

principles to the data lifecycle, can significantly enhance the efficiency and

agility of data lakes and data lakehouses. By automating data pipelines,

testing, and deployment, DataOps enables organizations to accelerate their data

operations, improve data quality, and reduce the risk of errors. Cloud-native

solutions offer various tools and services for DataOps automation, such as

workflow orchestration, CI/CD pipelines, and monitoring and alerting.

By carefully considering these

implementation aspects and leveraging the capabilities of cloud-native

solutions, organizations can build and manage data lakes and data lakehouses

that are scalable, flexible, cost-effective, and secure. These solutions can

empower organizations to unlock the full potential of their data assets and

drive innovation in today's data-driven world.

12. Case Study: Dataake (House) Solution at

Retail Company on AWS

I. Problem Statement

The leading retail company was

facing challenges with its legacy inventory management system. The primary

issues were:

- Slow Data

Processing:

The full load of inventory availability data from the central inventory

database to the sales and order management system took a considerable amount of

time (5-8 hours), hindering real-time decision-making.

- Delayed Incremental Updates: The incremental or delta load of inventory availability data also suffered from delays, taking up to 15 minutes. This further impacted the company's ability to respond quickly to changes in inventory levels.

These delays in data processing and

updates had a direct impact on the business, leading to potential stockouts,

overstocks, and missed sales opportunities.

II. Solution

The proposed solution leverages a cloud-based Data as a Service (DaaS) platform, specifically focusing on AWS, to create an "Inventory Availability Compute Platform." The key elements of this solution include:

- Data Ingestion: AWS Data

Migration Service (DMS) is used to replicate inventory snapshots, rules,

configurations, and transactional data from the central inventory database

into the DaaS data processing layer.

- Real-time Data

Processing:

The compute platform utilizes Kinesis Data Streams, Data Analytics, and Kinesis

Firehose to capture and process changes in inventory data in near real-time.

- Batch Processing: AWS Glue,

orchestrated with Step Functions, handles batch processing of full and delta

loads of inventory data.

- Data Storage: Amazon S3 buckets

serve as the data store for both raw and processed inventory data.

- Data Transfer: AWS File Transfer

securely transfers the processed inventory availability data to the sales

and order management system.

III. Measurable Outcomes

The primary goal of the solution is

to significantly improve the speed and efficiency of inventory data processing.

The document outlines the following target outcomes:

- Reduced Full Load Processing Time: The full load processing time from inventory availability to the sales and order management system is expected to be reduced to 20-30 minutes, a substantial improvement from the original 5-8 hours.

- Faster Incremental Updates: The incremental/delta load processing time is targeted to be brought down to 2-3 minutes, enabling near real-time inventory updates in the sales and order management system.

- These improvements in data

processing times would translate into tangible business benefits, including:

- Improved Inventory Management: Near

real-time inventory visibility would allow for better decision-making, leading

to optimized inventory levels and reduced stockouts or overstocks.

- Enhanced Customer Experience:

Faster order fulfillment and accurate inventory information would contribute to

a better customer experience, potentially increasing sales and customer

satisfaction.

- Increased Operational Efficiency: Streamlined data processing would enhance overall operational efficiency and agility in responding to market changes.

In essence, the solution aims to

modernize the retail company's inventory management capabilities by leveraging

cloud technologies and real-time data processing, ultimately driving business

growth and customer satisfaction.

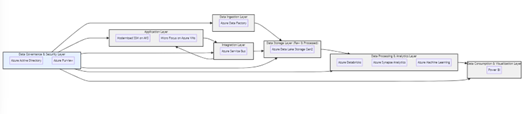

13. Case Study: Datalake (House)

Solution at Leading Beverages Company on Azure

I. Challenge

A leading beverage company relied on a

legacy Java-based web application called Shipment Scheduling & Maintenance

(SSM) for critical logistics operations. However, the system was tightly

coupled with a mainframe backend, utilizing outdated technologies like MQ for

integration and an Oracle database for data storage. This architecture led to

several challenges:

- Performance Bottlenecks and Operational Risk: Complex mainframe interactions and high transaction volumes often

caused performance issues and increased the risk of disruptions.

- Integration Complexities: The

reliance on MQ and FTP for integration with other systems created a brittle and

difficult-to-maintain environment, hindering agility.

- Limited Agility & Scalability: The legacy architecture restricted the company's ability to adapt to changing business needs or leverage modern cloud technologies for scalability.

II. Solution: Building a

Modern Data Foundation on Azure

The company embarked on a journey to modernize its SSM application and migrate it to Azure, focusing on building a modern data foundation to support agile logistics operations. The solution included:

- Data Lake Creation:

- Azure Data Lake Storage Gen2 was implemented to store raw and

processed data from various sources, including the modernized SSM application,

external systems, and potentially IoT devices for real-time shipment tracking.

- This provided a scalable and cost-effective storage layer for all

logistics-related data.

- Data Ingestion & Integration:

- Azure Data Factory was utilized to orchestrate data ingestion from

the modernized SSM application, the mainframe (now on Micro Focus), and other

relevant systems.

- Azure Service Bus replaced the legacy MQ integration, enabling

reliable and scalable communication between different components of the

architecture.

- Data Processing & Transformation:

- Azure Databricks, a powerful Apache Spark-based platform, was

employed to process and transform raw data into actionable insights.

- Azure Synapse Analytics, with its unified analytics capabilities,

was used for complex queries and reporting.

- Data Consumption & Analytics:

- Power BI was integrated to provide interactive dashboards and

visualizations for real-time monitoring and analysis of logistics operations.

- Azure Machine Learning was leveraged to build predictive models for

optimizing shipment scheduling, route planning, and resource allocation.

- Security & Governance:

- Azure Active Directory provided centralized identity and access

management, ensuring data security and compliance.

- Azure Purview was implemented to create a unified data map and

catalog, facilitating data discovery, lineage tracking, and governance.

III. Outcomes

This solution aligns with the

paper's objective of "Building a Modern Data Foundation in the Cloud"

by demonstrating how a data lakehouse architecture on Azure enabled the

beverage company to:

- Improve Performance & Scalability: The migration to Azure and adoption of cloud-native services led to

significant improvements in performance and scalability, allowing the company

to handle growing data volumes and transaction loads.

- Enhance Agility & Innovation:

The modern data foundation empowered the company to respond quickly to market

changes and leverage advanced analytics and machine learning for data-driven

decision-making.

- Reduce Costs & Complexity: The

transition to Azure enabled the company to optimize its infrastructure costs

and streamline its IT landscape.

IV. Specific Measurable Outcomes:

- Reduced Processing Time: The

modernization resulted in faster processing times for shipment scheduling and

maintenance tasks, leading to improved operational efficiency.

- Increased Visibility: Real-time

data access and analytics provided better visibility into logistics operations,

enabling proactive issue resolution and optimized resource allocation.

- Cost Savings: The cloud-based

solution led to cost savings by eliminating the need for on-premises

infrastructure and leveraging the pay-as-you-go model of cloud computing.

V. Conclusion:

This case study demonstrates how a

leading beverage company successfully modernized its legacy logistics

application and built a modern data foundation on Azure. By leveraging the

capabilities of Azure's data lakehouse services, the company achieved significant

improvements in performance, agility, and cost-efficiency, paving the way for a

data-driven future.

14. Role of Dataops in Cloud Native Data Lake and Data Lake

Houses

DataOps, the application of DevOps

principles to the data lifecycle, plays a crucial role in ensuring the

successful implementation and operation of cloud-native data lakes and data

lakehouses. By promoting collaboration, automation, and continuous improvement,

DataOps streamlines data pipelines, enhances data quality, and accelerates the

delivery of insights. In this section, we will explore the key principles of

DataOps and how they can be applied to optimize data lakes and data lakehouses

in the cloud.

- Collaboration and Communication: DataOps fosters a culture of collaboration between data engineers, data scientists, business analysts, and other stakeholders involved in the data lifecycle. This collaboration is essential for breaking down silos, promoting shared ownership of data processes, and ensuring that data solutions meet the needs of the business. In cloud-native environments, where data pipelines and workflows can span multiple services and teams, effective collaboration and communication become even more critical.

- Automation and Orchestration: Automation is a cornerstone of DataOps,

enabling organizations to streamline data pipelines, reduce manual errors, and

accelerate data processing. In cloud-native environments, automation can be

achieved through various tools and services, such as workflow orchestration

platforms, serverless functions, and managed data integration services. By

automating repetitive tasks and orchestrating complex data workflows,

organizations can improve efficiency, reduce costs, and free up valuable time

for data teams to focus on higher-value activities.

- Continuous Integration and Continuous Delivery (CI/CD): CI/CD practices, widely adopted in software development, can also be applied to data pipelines in data lakes and data lakehouses. By implementing version control, automated testing, and continuous deployment, organizations can ensure the quality and reliability of their data pipelines, enabling them to deliver insights faster and with greater confidence. In cloud-native environments, CI/CD pipelines can be easily integrated with cloud services and tools, further streamlining the development and deployment process.

- Monitoring and Observability: Monitoring and observability are essential for maintaining the health and performance of data lakes and data lakehouses. By collecting and analyzing metrics, logs, and traces from various components of the data pipeline, organizations can gain insights into data flows, identify bottlenecks, and proactively address issues. In cloud-native environments, cloud providers offer various monitoring and observability tools that can be integrated with data lakes and data lakehouses, providing real-time visibility into the data lifecycle and enabling proactive troubleshooting and optimization.

- Data Quality and Governance: Data quality and governance are critical for ensuring the accuracy, consistency, and trustworthiness of data in data lakes and data lakehouses. DataOps emphasizes the importance of data quality checks and governance mechanisms throughout the data lifecycle. In cloud-native environments, organizations can leverage cloud-native data quality and governance tools to automate data profiling, validation, cleansing, and lineage tracking, ensuring that data is reliable and compliant with regulatory requirements.

By embracing DataOps principles and

practices, organizations can maximize the value of their cloud-native data

lakes and data lakehouses. DataOps enables them to build and manage data

pipelines that are efficient, reliable, and scalable, ensuring that data is

transformed into actionable insights that drive business value.

15. Challenges, Best Practices

and Future Trends

While data lakes and data

lakehouses offer significant advantages, their implementation and management

come with their own set of challenges. Addressing these challenges and adopting

best practices is essential to ensure the success of your modern data foundation

in the cloud. Additionally, understanding future trends helps organizations

stay ahead of the curve and make informed decisions about their data strategy.

I.Challenges

- Data Governance and Security:

Ensuring proper data governance, access control, and security across a vast and

diverse data landscape can be complex.

- Data Quality and Consistency:

Maintaining data quality and consistency across different data sources and

formats is a persistent challenge.

- Data Discovery and Metadata Management: Efficiently discovering, understanding, and managing metadata

across the data lake or data lakehouse can be challenging.

- Skillset and Expertise: Building

and managing a modern data foundation requires specialized skills in cloud

technologies, data engineering, and data governance.

- Cost Optimization: Balancing storage costs, compute costs, and data access patterns to achieve cost-efficiency can be tricky.

II.Best Practices

- Establish a Robust Data Governance Framework: Define clear policies and procedures for data access, security,

privacy, and compliance.

- Implement Data Quality and Validation Processes: Enforce data quality checks and validation at every stage of the

data lifecycle.

- Leverage Metadata Management and Data Catalogs: Use data catalogs to capture and organize metadata, making data

discoverable and understandable.

- Invest in Training and Skill Development: Ensure your team has the necessary skills and expertise to build

and manage the data foundation.

- Adopt a Cloud-Native Approach:

Utilize cloud-native services and tools to leverage their scalability,

flexibility, and cost-effectiveness.

- Embrace Automation and Orchestration: Automate data pipelines and workflows to reduce manual effort and

improve efficiency.

- Monitor and Optimize Performance:

Continuously monitor and optimize performance to ensure efficient data

processing and query execution.

III.Future Trends

- Increased Adoption of Data Lakehouses: The trend towards combining the flexibility of data lakes with the

structure and performance of data warehouses is expected to accelerate.

- Real-time Data Processing and Analytics: The demand for real-time insights will drive the adoption of

technologies that enable real-time data processing and analytics.

- AI and Machine Learning Integration: Data lakes and data lakehouses will increasingly become the

foundation for AI and machine learning initiatives.

- Data Mesh Architecture: This

emerging approach decentralizes data ownership and management, empowering

domain teams to manage their own data products.

- Serverless Computing and Storage: Serverless technologies will continue to gain popularity due to their scalability and cost-efficiency.

Building a modern data foundation

in the cloud using data lakes and data lakehouses presents both opportunities

and challenges. By addressing these challenges, adhering to best practices, and

embracing future trends, organizations can create a robust and agile data

platform that fuels innovation and drives data-driven decision-making. The

evolution of cloud technologies, coupled with the growing maturity of data lake

and data lakehouse solutions, will undoubtedly shape the future of data

management and analytics.

16. Conclusion

The evolution of data management

and analytics has ushered in an era where cloud-native data lakes and data

lakehouses stand as pivotal pillars in constructing a modern data foundation.

The inherent scalability, flexibility, and cost-effectiveness of cloud

technologies empower organizations to harness the full potential of their data

assets, transcending the limitations of traditional on-premises solutions. The

convergence of data lakes and data lakehouses, coupled with the principles of

DataOps, creates a synergistic ecosystem that fosters agility, collaboration,

and data-driven decision-making.

The cloud's ability to seamlessly

scale resources on-demand ensures that data lakes and data lakehouses can

accommodate the ever-growing volumes of data generated by modern enterprises.

The flexibility of cloud-native solutions empowers organizations to adapt their

data architectures to evolving business needs, while the pay-as-you-go model

optimizes costs and promotes experimentation. Managed services and serverless

computing further streamline operations, allowing organizations to focus on

extracting value from their data rather than managing infrastructure.

The real-world use cases presented

in this paper illustrate the transformative impact of cloud-native data lakes

and data lakehouses across diverse industries. From personalized

recommendations in retail to accelerated drug discovery in healthcare and real-time

risk management in finance, these solutions are enabling organizations to gain

a competitive edge in today's data-driven landscape.

However, the successful

implementation of data lakes and data lakehouses in the cloud requires careful

consideration of various factors, including data architecture, ingestion,

governance, security, metadata management, performance optimization, and DataOps

practices. By addressing these considerations and leveraging the capabilities

of cloud-native solutions, organizations can build a robust and agile data

foundation that empowers them to unlock the full potential of their data

assets.

As the data landscape continues to

evolve, cloud-native data lakes and data lakehouses will play an increasingly

critical role in enabling organizations to extract insights, make informed

decisions, and drive innovation. The future holds immense possibilities, with

advancements in artificial intelligence, machine learning, and real-time

analytics further enhancing the capabilities of these solutions. By embracing

cloud-native technologies and adopting a DataOps mindset, organizations can

position themselves for success in the data-driven future, where data is not

just an asset but a strategic enabler of growth and transformation.

17. Glossary of Terms

- Data Lake: A

centralized repository that allows you to store all your structured and

unstructured data at any scale.

- Data Lakehouse: A new, open data management architecture that combines the flexibility,

cost-efficiency, and scale of data lakes with the data management and ACID

transactions of data warehouses, enabling business intelligence (BI) and

machine learning (ML) on all data.

- Cloud-Native: Applications and

services that are designed and built specifically to run on cloud computing

platforms.

- DataOps: A

collaborative data management practice focused on improving the communication,

integration, and automation of data flows between data managers and data consumers across an organization.

- ETL (Extract, Transform, Load): The

process of extracting data from source systems, transforming it into a suitable

format, and loading it into a target system.

- Big Data: Large and complex

datasets that cannot be easily managed or processed using traditional data

processing tools and techniques.

- Scalability: The ability of a

system to handle increasing amounts of work or data by adding resources.

- Flexibility: The ability of a

system to adapt to changing requirements or conditions.

- Cost-Effectiveness: The ability to

achieve a desired outcome with minimal expenditure of resources.

- Metadata: Data that describes other

data, providing information about its structure, content, and context.

- Data Governance: The overall management of the availability, usability, integrity,

and security of data used in an enterprise.

- ACID Transactions: A set of

properties (Atomicity, Consistency, Isolation, Durability) that guarantee

reliable processing of database transactions.

- RMS: Retail

Merchandising System

- iSAMS: In-store Sales

and Management System

- OSM: Order and Service

Management

- ETL: Extract,

Transform, Load

- AWS: Amazon Web

Services

- DMS: AWS Data

Migration Service

- CDC: Change Data

Capture

- VPC: Virtual Private

Cloud

- DJ: David Jones

- CRG: Country Road

Group

- SKU: Stock Keeping

Unit

- CLI: Command Line

Interface

- NFR: Non-Functional

Requirement

- AD: Active Directory

- HA: High Availability

- AZ: Availability Zone

- COTS: Commercial

off-the-shelf

- OSS: Open-source

software

- EKS: Elastic

Kubernetes Service

- EMR: Elastic Map

Reduce

- PCI: Payment Card

Industry Data Security Standard

- SOC: System and

Organization Controls Compliance standards

18. References

- Armbrust M, Ghodsi A, Zaharia M, et al. Lakehouse:

A New Generation of Open Platforms that Unify Data Warehousing and Advanced

Analytics. Proceedings of the VLDB Endowment, 2020;13: 3231-3244.

- Chen J, Ramakrishnan R. Data Lakes: The Evolution

of Big Data Architectures. Communications of the ACM, 2019;62: 72-82.

- Davenport T H,

Dyché J. Big Data in Big Companies. International Institute for

Analytics, 2013; 1-36.

- Gartner Inc. Data Lakehouse: A Converged Data

Management Solution for Modern Analytics. Gartner Research Reports, 2021.

- Hellerstein JM, Stonebraker M. What Every Data

Scientist Should Know about Data Management. Communications of the ACM, 2019;62:

36-44.

- Miloslavsky A, Van Zanten,M. Data Lakes and Their

Role in Advanced Analytics. Journal of Information Technology, 2018;33:

101-110.

- Nair A,

Sethi V. The Rise of Data Lakehouses: Bridging the Gap Between Data

Lakes and Warehouses. IEEE Cloud Computing, 2020;7: 14-22.

- Ramakrishna M. Building Scalable Data

Architectures in the Cloud: A Case Study on Data Lakes and Lakehouses. Journal

of Cloud Computing, 2022;11: 45-59.

- Schönberger VM, Cukier K. Big Data: A Revolution That Will Transform How We Live, Work, and Think. Houghton Mifflin Harcourt, 2017.

- Stonebraker M, Brodie ML. Data Lake vs. Data

Warehouse: Which Is Right for Your Business? Database Trends and Applications,

2018.