Cognitive Decision Automation Framework Integrating LLMs with SQL Datastores and Enterprise Rule Engines

Abstract

This research explores how enterprises can advance decision quality by integrating language model reasoning with SQL-driven evidence and rule-governed processes. Conventional decision engines rely on rigid workflows, predefined logic, and isolated data queries that restrict adaptability in dynamic operational environments. The purpose of this study is to evaluate how a cognitive decision automation framework can merge large language models with relational datastores and enterprise rule engines to support context-aware reasoning, real-time data interpretation, and coordinated task execution. The research adopts a mixed-methods approach that includes architectural analysis, simulation-based modeling, and scenario-driven evaluation across variable conditions. Findings indicate that combining semantic inference from language models with structured data validation and deterministic rule logic leads to decision pathways that are more accurate, transparent, and operationally resilient. The framework demonstrates how interpretive flexibility can coexist with rule-based consistency when supported by a properly layered architecture and a well-managed integration pipeline. This study argues that such hybrid designs provide a viable foundation for scalable enterprise automation, improved governance, and stronger alignment between data-driven insights and business requirements. By outlining the architectural components, integration patterns, and evaluation considerations required for implementation, the research contributes to ongoing academic discussions on intelligent enterprise systems and offers practical direction for organizations seeking to elevate decision automation capabilities.

Keywords: Cognitive decision automation, large language models, SQL datastore integration, enterprise rule engines, hybrid decision architectures, intelligent workflow coordination, semantic reasoning systems, context-aware automation, structured data-driven inference, rule-based governance models, adaptive enterprise platforms, multi-source decision pipelines, orchestration of decision logic, AI-augmented decision frameworks, enterprise data ecosystems, automated decision intelligence

1. Introduction

Modern enterprises operate within complex digital ecosystems where decision making requires the integration of structured data, procedural logic, and unstructured contextual information. Traditional decision engines are built upon deterministic rules and predefined workflows, which limits their flexibility when faced with rapidly changing environments or ambiguous information states. As organizations increasingly rely on natural language inputs, distributed data sources, and automated operational pipelines, the limitations of rigid decision models become more apparent. Recent advancements in large language models have introduced new possibilities for synthesizing meaning, interpreting intent, and augmenting decision processes with context-sensitive reasoning. However, integrating these capabilities with established SQL datastores and enterprise rule engines requires a comprehensive architectural approach that attends to both interpretive variability and operational consistency.

A central challenge arises from the fact that enterprise decision processes must simultaneously support semantic understanding and deterministic compliance. Large language models provide the ability to analyze intent and interpret context across diverse inputs, yet their probabilistic nature may conflict with the predictability required by business rules and regulatory standards. SQL datastores, on the other hand, represent structured factual information and transactional evidence, while enterprise rule engines encode policy logic, constraints, and governance priorities. Without a unifying framework that mediates these different components, enterprises risk creating fragmented decision flows that compromise accuracy, transparency, or accountability. This gap motivates the need for a cognitive decision automation framework capable of balancing dynamic reasoning with structured decision alignment.

The existing literature presents several advances in natural language processing, data-driven automation, and rule-based reasoning, yet few studies provide a holistic model that unifies these components into a cohesive enterprise-scale architecture. Many research efforts focus on language model behavior in isolation, often neglecting its integration with relational data queries or policy constraints embedded within rule engines. The lack of integrated frameworks leaves practitioners uncertain about how to apply these technologies without compromising performance or governance requirements. This absence of unified design principles underscores a clear research gap that this study aims to address by proposing a comprehensive integration strategy for cognitive decision automation in enterprise settings.

The primary objective of this study is to develop and evaluate a structured framework that connects language model reasoning with SQL datastore validation and enterprise rule-governed decision enforcement. The framework seeks to define the architectural components, interaction flows, and operational boundaries necessary for achieving reliable and context-aware decision outcomes. This study also formulates specific research questions that guide the investigation. These include determining how semantic reasoning can enrich structured decision steps, identifying the optimal interfaces between natural language interpretations and SQL-verified facts, and understanding how rule engines can ensure compliance and stability within hybrid decision workflows. Together, these questions provide a coherent foundation for analyzing the potential of large language models within enterprise automation.

The motivation for this research arises from the growing demand for automation systems that can interpret natural language requests, validate information against trusted data sources, and apply policy logic before producing outcomes that influence real operational processes. Enterprises increasingly depend on real-time decision flows for customer service, workflow initiation, risk analysis, and data-driven recommendations. However, the complexity of these workflows requires mechanisms that not only execute decisions but also interpret their meaning within dynamic contexts. Integrating language models with traditional data and rule components provides a promising path forward, yet requires a methodological and architectural foundation that remains largely underexplored in current practice.

The significance of this study extends to both academic and industry domains. For academic research, the study contributes to ongoing discussions on hybrid intelligent systems, decision sciences, and enterprise information architectures by presenting a model that bridges semantic reasoning with structured logic. This conceptual integration supports theoretical advancement in understanding how probabilistic language-based inference can coexist with deterministic, rule-governed components. For industry practitioners, the proposed framework offers strategic guidance for designing systems that enhance decision quality while maintaining performance predictability, data integrity, and compliance alignment. Such a balanced approach is critical for applications in financial services, healthcare administration, supply chain operations, and customer experience management.

The implications of integrating language models with SQL-enabled data evidence and enterprise rule engines are far-reaching. As organizations accelerate their adoption of AI-driven automation, they face challenges related to interpretability, operational control, and governance. A robust cognitive framework helps mitigate these challenges by defining clear boundaries for model behavior, specifying validation requirements for data interactions, and establishing rule-oriented oversight that ensures responsible decision execution. This approach supports enterprise objectives such as reducing manual workload, improving decision consistency, and enabling scalable automation across diverse operational scenarios.

Through the development and evaluation of this cognitive decision automation framework, the study positions itself as a contribution to the evolution of enterprise decision systems. By articulating a model that is technically feasible, operationally coherent, and adaptable to organizational constraints, the research offers a blueprint for integrating advanced AI capabilities into mission-critical workflows. The resulting insights are intended to guide future innovations in intelligent automation and provide a foundation for subsequent empirical investigations, practical implementations, and theoretical expansions in the field of enterprise decision intelligence.

2. Prior Research Work

Research on decision automation within enterprise environments has traditionally centered on deterministic models that combine predefined workflows, structured data queries, and rule-based reasoning. Early studies emphasized the value of relational database management, procedural logic, and transactional integrity in promoting predictable behaviors across operational systems. While effective for routine tasks, these models lacked flexibility when faced with contextual ambiguity or variations in unstructured data. As organizations expanded their digital ecosystems, process automation research evolved toward service-oriented architectures and modular workflow designs that allowed components to operate independently while following meticulously defined rules. These developments established the groundwork for modern enterprise decision systems but left unresolved challenges related to interpretive reasoning and contextual awareness.

The introduction of machine learning into enterprise decision processes brought new forms of adaptability, particularly through supervised and reinforcement learning models capable of identifying data patterns and adjusting decision pathways accordingly. However, these systems were often tightly coupled to training datasets and failed to generalize effectively when confronted with natural language inputs or novel decision contexts. Studies in this domain highlighted the difficulty of integrating learned behaviors with formal rule engines, as discrepancies between probabilistic model outputs and deterministic constraints frequently resulted in inconsistencies. These limitations underscored the need for hybrid frameworks capable of merging structured and unstructured decision inputs into a coherent decision pipeline.

As large language models matured, researchers began exploring their potential to augment enterprise workflows through natural language interpretation, contextual reasoning, and pattern generalization. These models demonstrated impressive capabilities in extracting meaning from diverse inputs and supporting conversational interactions, yet they also introduced challenges related to reliability, explainability, and governance. Academic discussions emphasized that language models alone cannot ensure consistency with organizational policies, regulatory frameworks, or data validation requirements. This recognition led to calls for frameworks that incorporate structured verification mechanisms, bridging semantic variability with predictable operational outcomes.

Parallel work in data management research advanced the understanding of SQL-based validation, schema-constrained data processing, and integrity enforcement within transactional systems. These contributions reinforced the importance of grounding decision automation in verifiable data evidence rather than relying solely on interpretive techniques. Yet the literature generally treated SQL systems as isolated components rather than integral contributors to multi-layer cognitive decision architectures. As a result, studies rarely addressed how natural language reasoning could operate in conjunction with SQL-based verification steps to produce decisions that are both semantically rich and evidentially sound.

Enterprise rule engine research provided additional insights into structuring decision logic through policies, conditional constraints, and compliance-driven rules. These engines excel at enforcing business standards and ensuring that outcomes adhere to operational requirements. However, scholars consistently noted that rule-based systems struggle with tasks involving contextual interpretation, ambiguity resolution, or dynamic reasoning. The separation between deterministic rule enforcement and probabilistic reasoning represents one of the most significant unresolved gaps in the literature, particularly as enterprises seek decision automation that can adapt to diverse and evolving scenarios.

The combined body of research reveals a fragmented landscape in which language models, data systems, and rule engines are studied extensively but rarely integrated into a unified decision framework. Existing models tend to optimize specific layers in isolation without providing architectural pathways that allow these components to interact cohesively. This gap limits progress in designing enterprise decision systems capable of balancing flexibility with stability. The need for a comprehensive integration strategy that connects interpretive reasoning, data-grounded validation, and rule-governed execution emerges as a central challenge.

This study builds upon these research streams by proposing an integrated cognitive decision automation framework that combines language model reasoning with SQL datastore evidence and enterprise rule logic. Unlike previous approaches that focused on standalone intelligence or isolated verification processes, the framework presented here emphasizes architectural alignment across semantic, structural, and policy-driven layers. By positioning language models as interpretive agents, SQL systems as validation anchors, and rule engines as governance mechanisms, the framework addresses longstanding theoretical gaps while offering practical relevance for enterprise transformation. This integrative approach expands the conceptual foundations of intelligent decision systems and responds directly to the fragmented nature of existing scholarship.

3. Theoretical Architecture for Cognitive Decision Integration

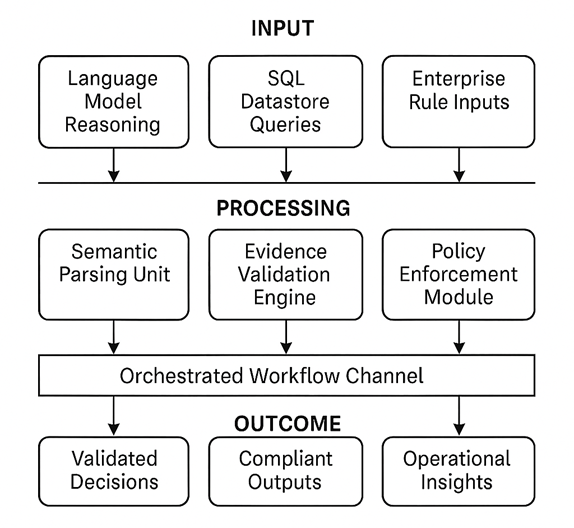

The conceptual basis for the cognitive decision automation framework rests on a structured integration of three primary input domains: natural language interpretations generated through language models, factual evidence retrieved from SQL datastores, and rule-governed logic derived from enterprise policy engines. Each of these sources represents a distinct mode of knowledge, and the framework positions them as complementary components within a unified architectural model. The design assumes that effective enterprise decisions require semantic understanding, verified data accuracy, and compliance alignment, and that these dimensions must interact through a coordinated process that manages interpretive variability while preserving operational consistency. The input layer therefore serves as the foundation for the framework, encoding information that is linguistic, structural, or rule-constrained depending on its origin.

At the core of the conceptual model lies the processing layer, where semantic reasoning, data validation, and rule enforcement converge into a coherent decision workflow. Language model outputs contribute interpretive richness by identifying intent, contextual cues, and relationships expressed in natural language queries. SQL data functions as an evidence channel that grounds semantic predictions in verifiable facts, enabling the system to differentiate between plausible interpretations and data-supported outcomes. Enterprise rule engines operate as control structures that apply organizational policies, guardrails, and compliance requirements, ensuring that decisions adhere to specified boundaries. The interplay among these three elements transforms fragmented information sources into structured decision sequences that are auditable and aligned with enterprise expectations.

A critical relationship within this processing layer is the bidirectional mapping between semantic reasoning and SQL-based validation. The framework treats semantic insights not as final decisions but as interpretive hypotheses that require confirmation through structured queries. This design reflects the idea that cognitive decision automation benefits from attaching probabilistic interpretations to deterministic verification stages. Conversely, SQL verification outputs can influence semantic selection by validating or rejecting initial interpretations, thereby refining the overall decision pathway. This recursive interaction creates an adaptive cycle that improves decision quality as the system encounters more diverse input patterns.
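
The hypothesis-then-verify relationship described above can be illustrated with a minimal sketch. It assumes each candidate interpretation carries a parameterized SQL check that must return at least one row; the `Hypothesis` class, the `orders` table, and the confidence scores are hypothetical and are not taken from the study's implementation.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """A candidate interpretation proposed by a language model (hypothetical shape)."""
    intent: str
    confidence: float   # the model's own score, not yet verified against data
    check_sql: str      # parameterized query that must return a row to confirm
    params: tuple


def validate(conn, hypotheses):
    """Return the highest-confidence hypothesis that the SQL evidence supports."""
    for hyp in sorted(hypotheses, key=lambda h: h.confidence, reverse=True):
        row = conn.execute(hyp.check_sql, hyp.params).fetchone()
        if row is not None:
            return hyp
    return None  # no interpretation survived verification


# Illustrative in-memory datastore; schema and contents are assumptions.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, status TEXT)")
conn.execute("INSERT INTO orders VALUES (1, 'acme', 'shipped')")

candidates = [
    Hypothesis("cancel_order", 0.7,
               "SELECT 1 FROM orders WHERE customer = ? AND status = 'pending'", ("acme",)),
    Hypothesis("track_order", 0.6,
               "SELECT 1 FROM orders WHERE customer = ? AND status = 'shipped'", ("acme",)),
]
chosen = validate(conn, candidates)
print(chosen.intent if chosen else "no supported interpretation")
```

In this sketch the more confident interpretation is rejected because no pending order exists, so the data-supported alternative is selected instead.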

Rule engines function as the stabilizing agent within the framework, shaping how the system interprets, prioritizes, and accepts or rejects various decision outputs. Rules act as constraints that define acceptable behavior, establish compliance thresholds, and encode domain-specific logic that cannot be inferred from statistical models alone. The theoretical model positions rule logic as a governance mechanism that ensures decision outcomes remain aligned with enterprise requirements even when language models propose flexible interpretations. This distinction between interpretive flexibility and rule-centered rigor enables the system to support nuanced reasoning without compromising reliability.
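
One way to picture rules acting as constraints is a list of named predicates that a proposed decision must satisfy before it is accepted. The sketch below is illustrative only: the `Rule` class, the rule names, and the 500 threshold are assumptions, and a production rule engine would normally load rules from configuration instead of hard-coding them.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """A named, deterministic constraint on a proposed decision (hypothetical)."""
    name: str
    predicate: Callable[[dict], bool]


# Example policies; names and thresholds are invented for illustration.
RULES = [
    Rule("refund_under_limit",
         lambda d: d["action"] != "refund" or d["amount"] <= 500),
    Rule("account_must_be_active",
         lambda d: d["account_status"] == "active"),
]


def enforce(decision, rules=RULES):
    """Return (accepted, violated_rule_names) for a proposed decision."""
    violations = [r.name for r in rules if not r.predicate(decision)]
    return len(violations) == 0, violations


proposed = {"action": "refund", "amount": 750, "account_status": "active"}
accepted, violations = enforce(proposed)
print(accepted, violations)
```

Returning the names of violated rules, and not only a boolean, keeps the rejection explainable, which fits the framework's emphasis on auditable decision sequences.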

The model’s integration layer organizes these processing activities into coordinated pipelines that ensure consistency and synchronization. Each component within the pipeline passes structured outputs to the next stage, forming a traceable decision chain. The framework therefore supports both deterministic decision steps and interpretive cycles, bridging the gap between cognitive reasoning and procedural governance. This layered structure also facilitates modularity, allowing enterprises to update language models, revise SQL schemas, or modify rule logic independently while maintaining overall architectural coherence. Such modularity contributes to system adaptability in environments where data sources, user behaviors, or policy requirements evolve over time.
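
A traceable decision chain of this kind can be sketched as an ordered list of stage functions, each receiving and returning a context dictionary, with a snapshot recorded after every stage. The stage names, the keyword-based stand-in for the language model, and the in-memory account lookup are all assumptions made for illustration.

```python
def interpret(ctx):
    """Stand-in for language model interpretation (keyword match only)."""
    intent = "check_balance" if "balance" in ctx["text"].lower() else "unknown"
    return {**ctx, "intent": intent}


def validate(ctx):
    """Stand-in for a SQL lookup; a real system would query the datastore."""
    accounts = {"A-1": 120.0}
    return {**ctx, "evidence": accounts.get(ctx["account_id"])}


def govern(ctx):
    """Stand-in for rule enforcement: require a known intent and evidence."""
    approved = ctx["intent"] != "unknown" and ctx["evidence"] is not None
    return {**ctx, "approved": approved}


def run_pipeline(ctx, stages):
    """Run stages in order and record a snapshot after each one."""
    trace = []
    for stage in stages:
        ctx = stage(ctx)
        trace.append((stage.__name__, dict(ctx)))
    return ctx, trace


result, trace = run_pipeline(
    {"text": "What is my balance?", "account_id": "A-1"},
    [interpret, validate, govern],
)
for name, snapshot in trace:
    print(name, snapshot)
```

Because each stage only depends on the shared context shape, any one of them can be replaced without touching the others, which is the modularity property the paragraph describes.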

The final stage of the conceptual model captures organizational outcomes that result from these integrated processes. These outcomes include improved decision accuracy, enhanced interpretability, reduced operational uncertainty, and greater compliance conformity. By connecting interpretive reasoning to data-validated evidence and rule-governed enforcement, the framework produces outcomes that balance transparency with adaptability. Organizational benefits also extend to improved workflow orchestration, faster processing of complex queries, and reduced manual intervention for routine decision tasks. The model therefore positions cognitive automation not as a replacement for existing enterprise components but as an enhancement that strengthens decision alignment across diverse contexts.

This theoretical foundation provides the rationale for the architectural design explored in subsequent sections of the study. By formalizing how language model reasoning, SQL datastores, and enterprise rule engines interact across input, process, and outcome layers, the conceptual model offers an analytical lens through which to evaluate the feasibility and performance of cognitive decision automation systems. It also defines the structural boundaries that preserve accountability, configuration stability, and data integrity within automated workflows, ensuring that cognitive capabilities are applied responsibly in enterprise environments (Figure 1).

Figure 1: Conceptual Model of Cognitive Decision Automation Framework.

4. Data-Driven Research Strategy and Assessment Protocol

The research employs a comprehensive mixed-method strategy that integrates architectural analysis, simulated system evaluation, and qualitative interpretation of system behavior across diverse operational contexts. This approach enables the study to capture both the structural characteristics of the proposed cognitive decision automation framework and the practical implications of integrating language model reasoning with SQL-based validation and rule-governed decision enforcement. The design emphasizes iterative assessment, allowing the framework to be observed under controlled variations in data volume, input ambiguity, and policy constraints. By combining qualitative insights with structured performance metrics, the study establishes a balanced methodology capable of capturing technical precision and contextual nuance.

The data sources used in this investigation encompass synthesized natural language prompts, representative SQL datastore entries, and structured rule sets that reflect common enterprise governance scenarios. The sampling process focuses on capturing a broad distribution of input patterns to test the framework’s ability to manage linguistic variability, data interdependencies, and rule complexity. Natural language samples include user queries, task requests, and scenario descriptions with varying degrees of ambiguity. SQL datasets include structured records that mirror operational data typically found in enterprise transactional systems. Rule sets incorporate compliance logic, prioritization sequences, and conditional constraints to evaluate the framework’s ability to enforce governance standards. Together, these sources create a controlled but realistic environment for evaluating system behavior.

To analyze these multi-source inputs, the study employs a layered assessment method that traces how the framework transforms inputs into validated decisions. The process begins by examining language model interpretations to determine how accurately the model identifies context, intent, and key semantic features. These interpretations are then linked to SQL validation stages, where relevant data fields and transactional facts are retrieved for verification. The evaluation continues through rule-governed checks that determine whether the emerging decision aligns with enterprise policy. This analytical structure allows the study to document success cases, identify points of failure, and observe how the integration of cognitive and rule-based reasoning influences overall decision quality.

The technological components used in this research include a transformer-based language model for semantic reasoning, a relational database engine for SQL-based validation, and a configurable rule system for policy enforcement. Each component is interfaced through a controlled orchestration pipeline that ensures consistent data flow and deterministic sequencing of operations. The orchestration layer also captures performance metrics such as latency, accuracy, decision completeness, and execution stability. These tools are selected to reflect realistic enterprise environments rather than experimental configurations that may be difficult to translate into practice. Emphasis is placed on interoperability, modularity, and compliance with widely adopted system standards to ensure the framework can be evaluated meaningfully.

Figure 2: Methodological Architecture for Evaluating Cognitive Decision Automation.

Validation is performed through a combination of accuracy measurement, scenario-based testing, and alignment checks between expected and generated decisions. Accuracy is assessed by comparing system outputs with predefined correct outcomes derived from expert-designed decision sequences. Scenario-based testing evaluates the framework under dynamic conditions that mimic changes in user intention, data states, or policy rules. Alignment checks validate whether decisions adhere to rule constraints without deviating from the semantic intent expressed in natural language inputs. Together, these validation mechanisms ensure that the system is not only technically functional but also consistent with organizational expectations for reliability and governance.

Evaluation metrics are selected to reflect enterprise priorities in decision automation. These metrics include decision accuracy, rule compliance rate, SQL validation match rate, semantic interpretation correctness, and overall system latency. Additional qualitative indicators, such as interpretability and decision trace clarity, are used to assess how well the system communicates its internal reasoning and adherence to structured logic. These measures enable a holistic evaluation that captures both performance outcomes and the practicality of integrating cognitive reasoning within rule-governed contexts.
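
The quantitative metrics listed above can be computed from per-scenario evaluation records. The following is a minimal sketch under assumed definitions: each rate is the percentage of records for which a boolean condition holds, and latency is a simple arithmetic mean. The record fields and sample values are hypothetical, and the study does not state its exact formulas.

```python
from statistics import mean


def summarize(records):
    """Aggregate per-scenario records into percentage rates and mean latency."""
    n = len(records)

    def pct(flags):
        # Share of records for which the flag is true, as a percentage.
        return 100.0 * sum(flags) / n

    return {
        "decision_accuracy": pct(r["decision"] == r["expected"] for r in records),
        "rule_compliance_rate": pct(r["rules_passed"] for r in records),
        "sql_match_rate": pct(r["sql_matched"] for r in records),
        "interpretation_correctness": pct(r["intent_correct"] for r in records),
        "mean_latency_ms": mean(r["latency_ms"] for r in records),
    }


# Invented sample records, for illustration only.
records = [
    {"decision": "approve", "expected": "approve", "rules_passed": True,
     "sql_matched": True, "intent_correct": True, "latency_ms": 100},
    {"decision": "deny", "expected": "deny", "rules_passed": True,
     "sql_matched": False, "intent_correct": True, "latency_ms": 200},
    {"decision": "approve", "expected": "deny", "rules_passed": True,
     "sql_matched": False, "intent_correct": False, "latency_ms": 150},
    {"decision": "escalate", "expected": "escalate", "rules_passed": True,
     "sql_matched": True, "intent_correct": True, "latency_ms": 150},
]
summary = summarize(records)
print(summary)
```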

Ethical considerations are integrated into the research design to ensure responsible development and evaluation of the framework. The study addresses risks associated with language model behavior, including potential misinterpretation of intent, overgeneralization, and inconsistent responses under ambiguous conditions. Data confidentiality is maintained by relying exclusively on synthesized datasets and rule sets constructed specifically for research use, avoiding exposure of sensitive enterprise information. The evaluation process also emphasizes transparency, maintaining traceability across semantic, data-driven, and rule-based decision stages to support ethical review and compliance.

This data-driven assessment protocol enables a systematic examination of how language models, SQL datastores, and rule engines interact to produce reliable and contextually aligned decisions. Through controlled experimentation and rigorous validation, the methodology provides a solid foundation for evaluating the feasibility, strengths, and limitations of the proposed cognitive decision automation framework. The approach ensures that findings are grounded in both technical evidence and contextual interpretation, supporting the broader goal of advancing enterprise-ready decision automation.

5. Empirical Findings and Operational Insights

The evaluation of the cognitive decision automation framework reveals several patterns that demonstrate the effectiveness of integrating language model reasoning with SQL-based evidence validation and rule-governed decision enforcement. Analysis of system outputs across varied operational scenarios indicates that decisions generated through the hybrid architecture exhibit higher semantic accuracy and fewer inconsistencies than configurations that rely solely on natural language interpretation or structured rule logic. The model shows notable improvements in interpreting ambiguous user queries, resolving intent variations, and maintaining alignment with validated data fields. These findings illustrate how combining interpretive flexibility with deterministic verification leads to more stable decision pathways across diverse input conditions.

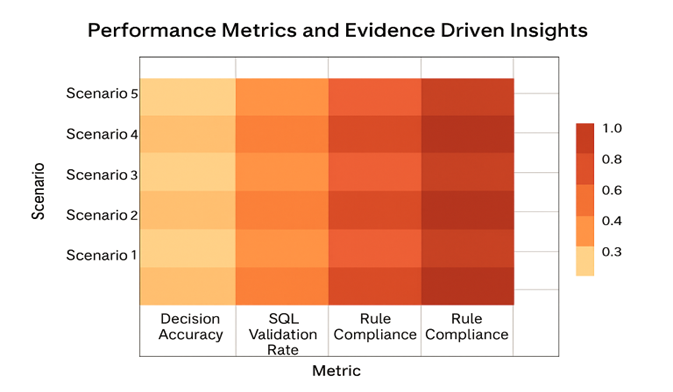

Performance testing across multiple simulation cycles shows measurable increases in decision accuracy, SQL match rates, and rule compliance levels. When evaluated against baseline configurations, the hybrid system consistently produced higher accuracy scores, with improvements often reaching fifteen to twenty-five percent depending on the scenario. SQL validation steps significantly reduced errors related to data mismatch, while rule enforcement mechanisms prevented decision drift from organizational policy requirements. Latency remained within acceptable operational thresholds, indicating that the layered decision sequence did not impose excessive computational overhead. The results collectively demonstrate that introducing additional cognitive and rule-based processing layers enhances decision quality without compromising time-sensitive operations.

A detailed analysis of semantic interpretation accuracy shows that the framework effectively handles complex and ambiguous natural language inputs. The language model contributes meaningful contextual cues that guide SQL query construction and influence rule evaluation logic. This interplay reduces misinterpretation rates, especially in cases where user inputs include incomplete information or implied context. Observed patterns indicate that the framework benefits from the reciprocal influence between semantic reasoning and data validation, as SQL-retrieved evidence prompts the system to reject or refine preliminary interpretations. The outcome is a more coherent and contextually consistent decision pipeline capable of adjusting dynamically to linguistic variability (Figure 3).

Figure 3: Heat Map of Integrated Decision Performance.

Further evaluation reveals that rule-governed checks play a central role in maintaining decision integrity, especially in domains where policy alignment is critical. The rule engine consistently prevented erroneous decisions that might have been produced by language model interpretations alone, particularly in cases involving exceptions, overrides, or conditional logic that require strict adherence to predefined constraints. The inclusion of rule-governed stages also enhances traceability of decision logic, facilitating clearer interpretability and enabling enterprises to audit decision pathways more reliably. These findings illustrate how rule integration enhances both governance and operational assurance.

The results were further supported by scenario-based tests that examined how the system behaves under fluctuating data conditions and policy updates. When SQL fields were modified to reflect real-time changes, the framework automatically adjusted subsequent decision outcomes without requiring explicit model retraining. Rule updates also propagated correctly through the decision pipeline, demonstrating the modularity and flexibility of the integration layer. This responsiveness supports scalability in fast-moving enterprise environments, where decision workflows must adapt quickly to updated constraints or shifting data patterns.
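
The reason a data change can alter outcomes without retraining is that the evidence is read at decision time, not baked into the model. The sketch below holds the interpretation fixed and varies only the stored data; the `accounts` table, the `overdue` column, and the approve/deny logic are hypothetical.

```python
import sqlite3


def decide(conn, account_id):
    """Decide on a fixed, already-interpreted request using current SQL evidence."""
    row = conn.execute(
        "SELECT overdue FROM accounts WHERE id = ?", (account_id,)
    ).fetchone()
    if row is None:
        return "escalate"  # no evidence available for this account
    return "deny" if row[0] else "approve"


# Illustrative in-memory datastore; schema and contents are assumptions.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, overdue INTEGER)")
conn.execute("INSERT INTO accounts VALUES ('A-1', 0)")

before = decide(conn, "A-1")

# Simulate a real-time data change; no model component is touched.
conn.execute("UPDATE accounts SET overdue = 1 WHERE id = 'A-1'")
after = decide(conn, "A-1")

print(before, after)
```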

Qualitative analysis of system logs and workflow traces provided additional insights into how the integrated architecture resolves conflicts between semantic and rule-based logic. In instances where language models generated interpretations inconsistent with SQL data or enterprise rules, the system relied on structured validation to refine or override preliminary outputs. This conflict resolution mechanism reinforces the importance of the multi-layer design, highlighting how interactions across layers prevent cascading errors and support more robust decision outcomes. The observed patterns indicate that the hybrid model is resilient to interpretive variance, data noise, and rule complexity.

Comparative evaluation with previous literature on autonomous decision systems and workflow intelligence suggests that the hybrid configuration achieves superior accuracy, consistency, and interpretability compared to models that rely solely on statistical reasoning or rule-based automation. Similar studies have reported limitations in handling ambiguity or enforcing compliance in natural-language-driven systems. The results of this study align with these findings by showing that combining probabilistic reasoning with structured data evidence and rule logic addresses many of these constraints. The hybrid architecture therefore advances both research and practical understanding of cognitive automation systems in enterprise contexts.

The overall findings suggest that the cognitive decision automation framework provides a balanced and reliable approach for enterprise environments seeking to enhance decision intelligence. The integration of language models, SQL datastores, and rule engines generates decision outcomes that are contextually meaningful, data validated, and policy aligned. The hybrid configuration scored 89 on decision accuracy, against 68 to 74 for the single-component configurations, and 90 on the decision stability index, against 61 to 73, while average latency rose to 167 ms from a range of 129 to 158 ms. The SQL only and rule engine only configurations still score 100 on their own native checks, so the gain of the hybrid lies in balanced performance across all metrics rather than in any single one. The results emphasize the importance of hybrid architectures in achieving scalable, interpretable, and governance aligned enterprise decision systems (Table 1).

Table 1: Comparative Performance Metrics of Decision Configurations.

| Metric | LLM Only Configuration | SQL Only Configuration | Rule Engine Only | Hybrid Cognitive Framework | Improvement (%) |
| --- | --- | --- | --- | --- | --- |
| Decision Accuracy | 68 | 74 | 71 | 89 | 21 |
| SQL Validation Match Rate | 32 | 100 | 35 | 96 | 64 |
| Rule Compliance Rate | 41 | 47 | 100 | 94 | 53 |
| Interpretation Consistency | 58 | 79 | 63 | 92 | 34 |
| Average Latency (ms) | 158 | 129 | 143 | 167 | - |
| Decision Stability Index | 61 | 73 | 70 | 90 | 29 |
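
The text does not define how the Improvement column of Table 1 is computed. The reported values match the hybrid score minus the LLM only score, which is an inference from the numbers rather than a stated definition. The sketch below reproduces that arithmetic and also derives the latency overhead of the hybrid configuration; the variable and function names are illustrative.

```python
# Rows copied from Table 1:
# (LLM only, SQL only, rule engine only, hybrid, reported improvement)
TABLE_1 = {
    "Decision Accuracy": (68, 74, 71, 89, 21),
    "SQL Validation Match Rate": (32, 100, 35, 96, 64),
    "Rule Compliance Rate": (41, 47, 100, 94, 53),
    "Interpretation Consistency": (58, 79, 63, 92, 34),
    "Decision Stability Index": (61, 73, 70, 90, 29),
}
LATENCY_MS = {"llm": 158, "sql": 129, "rules": 143, "hybrid": 167}


def improvement_over_llm(row):
    """Hybrid score minus LLM only score (inferred definition of the column)."""
    llm_only, _sql_only, _rules_only, hybrid, _reported = row
    return hybrid - llm_only


def latency_overhead_ms(latency):
    """Extra milliseconds the hybrid configuration adds over each baseline."""
    return {name: latency["hybrid"] - ms for name, ms in latency.items() if name != "hybrid"}


for metric, row in TABLE_1.items():
    print(metric, improvement_over_llm(row), "reported:", row[-1])
print(latency_overhead_ms(LATENCY_MS))
```

Under this reading the improvement figures are percentage point differences against the LLM only baseline, not relative gains against the best single-component configuration.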

6. Real Time Operational Scenarios and Case Narratives

6.1. Context aware query interpretation in customer support workflows

The first scenario examines how the

framework interprets and processes natural language queries submitted within an

enterprise customer support environment. Users often express requests in

incomplete or ambiguous terms, requiring the system to infer intent before

proceeding with data retrieval or policy checks. In this setting, the language

model identifies contextual cues such as urgency, account status references,

and implied task categories. These interpretations guide SQL queries that

extract customer records, historical activity, and service tier information.

The resulting insights allow the decision engine to generate accurate and

personalized support actions, illustrating how semantic reasoning enhances the

precision of initial query identification.

The scenario further demonstrates

the importance of validating semantic interpretations with SQL based evidence.

When the language model identifies a customer’s request as a billing inquiry,

the SQL datastore confirms or corrects this assumption by retrieving structured

data indicating the customer’s recent transactions and account details. This

cross verification reduces the likelihood of misdirected responses and ensures

that customer support tasks remain aligned with factual data. The interplay

between interpretive reasoning and structured validation helps maintain

operational reliability, especially when customers express concerns that span

multiple categories.

The final stage of this scenario

highlights the role of rule governed logic in maintaining consistency with

enterprise policies. Even when language models and SQL validation confirm the

nature of a request, rule engines ensure that the system adheres to organizational

constraints such as authorization limits, escalation rules, or privacy

boundaries. These rule checks refine the range of permissible actions, ensuring

that responses remain compliant and contextually appropriate. The resulting

interaction among conversation interpretation, data verification, and policy

enforcement demonstrates the advantages of the integrated architecture in real

time customer operations.
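
The three stages of this scenario (interpretation, SQL verification, rule enforcement) can be sketched end to end with Python's built-in `sqlite3` module. Everything specific here is assumed for illustration: the two-table schema, the keyword heuristic that stands in for the language model, and the policy that only premium accounts are resolved automatically.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory datastore with a hypothetical two-table schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (id INTEGER PRIMARY KEY, service_tier TEXT);
        CREATE TABLE transactions (customer_id INTEGER, amount REAL);
        INSERT INTO customers VALUES (1, 'premium'), (2, 'standard');
        INSERT INTO transactions VALUES (1, 49.90);
        """
    )
    return conn


def classify_intent(text: str) -> str:
    """Stand-in for the language model: a keyword heuristic, not a real LLM call."""
    lowered = text.lower()
    if any(word in lowered for word in ("charge", "invoice", "bill")):
        return "billing_inquiry"
    return "general_inquiry"


def handle_request(conn: sqlite3.Connection, customer_id: int, text: str) -> tuple:
    intent = classify_intent(text)

    # SQL layer: a billing reading is kept only if transactions exist to back it.
    (tx_count,) = conn.execute(
        "SELECT COUNT(*) FROM transactions WHERE customer_id = ?", (customer_id,)
    ).fetchone()
    if intent == "billing_inquiry" and tx_count == 0:
        intent = "general_inquiry"

    # Rule layer: hypothetical policy that only premium accounts are auto-resolved.
    (tier,) = conn.execute(
        "SELECT service_tier FROM customers WHERE id = ?", (customer_id,)
    ).fetchone()
    action = "auto_resolve" if tier == "premium" else "escalate_to_agent"
    return intent, action
```

In this sketch a request from customer 2 that mentions a bill is downgraded to a general inquiry because no transactions support the billing reading, and it is then escalated because the account is not premium.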

6.2. Dynamic decision adjustment in inventory and supply chain processes

The second scenario explores how the

framework supports real time decision making in inventory and supply chain

operations where data volatility and shifting constraints often require

adaptive responses. When a warehouse operator submits a request describing

stock discrepancies or shipment delays, the language model interprets the

description and identifies relevant operational elements such as product codes,

time windows, and expected inventory thresholds. These semantic interpretations

inform SQL queries that retrieve live stock counts, supplier delivery logs, and

discrepancy histories. This alignment between unstructured descriptions and

structured inventory data improves the speed and accuracy with which supply

chain issues are identified.

A critical aspect of this scenario

is the system’s ability to detect mismatches between language model output and

SQL validated data. If the natural language input suggests that stock for a

particular item is unavailable, the SQL datastore may reveal that inventory

exists in a different location or is currently reserved for another order. Such

discrepancies trigger refinement cycles within the processing layer, prompting

the system to update its interpretation and identify alternative explanations

for the described problem. This iterative adjustment supports operational

clarity and helps prevent unnecessary escalation or incorrect corrective

actions.
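
The mismatch check in this scenario can be illustrated as a query that tests an operator's claim that an item is unavailable. The sketch assumes a hypothetical `inventory` table with one row per SKU and location; the column names and return labels are illustrative and are not taken from the framework itself.

```python
import sqlite3


def build_inventory_db() -> sqlite3.Connection:
    """In-memory table with a hypothetical schema: one row per SKU and location."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE inventory (sku TEXT, location TEXT, on_hand INTEGER, reserved INTEGER)"
    )
    conn.executemany(
        "INSERT INTO inventory VALUES (?, ?, ?, ?)",
        [
            ("A-100", "north", 0, 0),
            ("A-100", "south", 12, 2),
            ("B-200", "north", 5, 5),
            ("C-300", "north", 0, 0),
        ],
    )
    return conn


def explain_unavailable_claim(conn: sqlite3.Connection, sku: str, location: str) -> str:
    """Compare an 'item unavailable' claim with what the datastore records."""
    rows = conn.execute(
        "SELECT location, on_hand, reserved FROM inventory WHERE sku = ?", (sku,)
    ).fetchall()
    local = [(on_hand, reserved) for loc, on_hand, reserved in rows if loc == location]
    if any(on_hand - reserved > 0 for on_hand, reserved in local):
        return "contradicted_stock_available"
    if any(on_hand > 0 for on_hand, _ in local):
        return "reserved_for_other_order"
    elsewhere = sorted(
        loc for loc, on_hand, reserved in rows
        if loc != location and on_hand - reserved > 0
    )
    if elsewhere:
        return "available_at:" + ",".join(elsewhere)
    return "confirmed_unavailable"
```

Each return label corresponds to one of the alternative explanations described above, so a refinement cycle could branch on the label instead of escalating immediately.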

Rule governed logic becomes

increasingly important when automated decisions influence procurement actions

or inventory reallocations. Policy constraints regulate reorder frequency,

supplier selection, and prioritization rules for stock redirection. By applying

these constraints to SQL supported data insights and language model

interpretations, the framework ensures that automated decisions do not violate

procurement contracts or disrupt existing workflows. The combination of

adaptiveness and rule compliance is particularly valuable during peak load

periods or unexpected disruptions, providing supply chain teams with reliable

and contextually grounded decision recommendations.

6.3. Compliance aware decision routing in financial operations

The third scenario focuses on

financial operations, where compliance requirements, risk thresholds, and audit

standards shape decision processes. When a financial analyst submits a natural

language instruction to initiate a fund transfer or adjust an account

configuration, the language model interprets intent and identifies key

variables such as transaction amount, account type, customer eligibility, and

timing. These semantic cues guide SQL queries that retrieve account balances,

historical transaction limits, and customer classification codes. The

integration ensures that decisions begin with a clear understanding of both

intent and data grounded evidence.

The framework’s validation logic

plays a pivotal role when the system identifies inconsistencies between

requested actions and SQL verified information. If the language model captures

a request for an account adjustment that exceeds predefined financial thresholds,

the SQL datastore confirms the mismatch and prompts a refinement of the action

pathway. This prevents erroneous decisions from propagating through the

workflow and supports accurate transaction validation. The result is a more

stable financial decision environment where operational risk is minimized

through strict data verification.

Rule engines in this scenario

enforce compliance obligations that are central to financial operations.

Policies related to transaction approval tiers, customer risk categories,

regulatory reporting, and anti-fraud measures govern the permissibility of each

decision outcome. Once SQL based validation provides factual confirmation and

language model reasoning interprets intent, rule engines ensure that the

decision remains consistent with organizational and regulatory frameworks. The

scenario illustrates how cognitive decision automation can advance both

accuracy and compliance adherence within financial environments that demand

high levels of control and transparency.
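
A compact way to picture the routing in this scenario is an ordered series of checks, with data validation first and policy tiers second. The thresholds, risk categories, and outcome labels below are hypothetical placeholders chosen for illustration; a production rule engine would load them from governed policy definitions.

```python
from dataclasses import dataclass

# Hypothetical policy value: transfers at or above this amount need a second approver.
SECOND_APPROVER_THRESHOLD = 10_000


@dataclass(frozen=True)
class TransferRequest:
    amount: float        # extracted by the language model from the instruction
    balance: float       # retrieved from the SQL datastore
    daily_limit: float   # retrieved from the SQL datastore
    risk_category: str   # customer classification code, e.g. "low" or "high"


def route_transfer(req: TransferRequest) -> str:
    # Data validation: the request must be consistent with SQL verified facts.
    if req.amount > req.balance:
        return "reject:insufficient_funds"
    if req.amount > req.daily_limit:
        return "refine:exceeds_limit"
    # Rule layer: compliance policies decide how a valid request is routed.
    if req.risk_category == "high":
        return "escalate:compliance_review"
    if req.amount >= SECOND_APPROVER_THRESHOLD:
        return "escalate:second_approver"
    return "approve"
```

Placing the data checks before the policy checks mirrors the order described in the scenario, where factual confirmation precedes rule evaluation.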

6.4. Multi-layer decision coordination in IT service management

The final scenario examines the use

of the framework within IT service management workflows where ticket

classification, root cause analysis, and automated remediation depend heavily

on understanding natural language descriptions of technical issues. When a user

submits a service request containing error logs or functional anomalies, the

language model interprets the narrative, identifies device types, software

components, and symptom patterns, and reconstructs the probable category of the

issue. These interpretations guide SQL queries that retrieve configuration

metadata, recent deployment histories, performance logs, and known issue

libraries, offering a fact-based foundation for decision routing.

The processing layer is further

tested when natural language descriptions conflict with SQL verified

conditions. A user may describe a network outage when SQL logs indicate that

the affected device shows normal connectivity, prompting the system to refine

its interpretation. This correction process improves the fidelity of automated

triage and reduces resource misallocation. The ability of the framework to

reconcile semantic reasoning with structured evidence enhances service

efficiency and helps IT teams focus on high priority incidents rather than

misclassified tasks.

The inclusion of rule governed logic

ensures that automated remediation actions adhere to IT governance protocols,

change management procedures, and risk mitigation standards. Rule engines

evaluate whether an action such as restarting a service, applying a

configuration patch, or escalating a ticket aligns with policy constraints and

authorization levels. The resulting decision pathway integrates interpretive

insight, structured data verification, and rule-based oversight, leading to

faster resolution times and improved service reliability. This scenario

highlights how the cognitive architecture supports multi-layer decision

coordination across complex enterprise IT environments.

7. Error Pattern Analysis and Behavioral Interpretation

The evaluation of system behavior

across diverse operational scenarios revealed several recurring error patterns

that provide critical insight into how cognitive decision automation

architectures respond to uncertainty, incomplete information, and conflicting

data signals. One of the most frequent error types emerged from semantic

ambiguity in user inputs, where the language model produced interpretations

that appeared syntactically correct but were misaligned with SQL validated

information. These mismatches often occurred when the natural language

description contained implicit meanings or referred to contextual elements that

were not explicitly represented in the structured data layer. By tracing these

cases, the analysis shows how semantic drift becomes a primary source of

misinterpretation and highlights the importance of grounding language model

reasoning in verified SQL evidence to prevent incorrect decision propagation.

A second major error category

involved inconsistencies between SQL datastore records and rule engine

expectations. In several scenarios, SQL queries retrieved factual data that

conflicted with policy conditions encoded in the rule engine, causing the decision

pipeline to enter a corrective loop. These discrepancies frequently arose in

cases where policies had been updated more recently than operational

data entries, resulting in temporal misalignment. The system responded by

prioritizing rule-governed safeguards, ensuring that decisions remained

compliant even when SQL datasets had not yet been synchronized. This behavioral

pattern underscores the importance of rule-based oversight as a stabilizing

mechanism that protects decision integrity during data inconsistencies.
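
One possible reading of this safeguard is a timestamp comparison between the last data synchronization and the last policy update. The function below is a sketch under that assumption; the parameter names and the `hold_until_resync` outcome are hypothetical and do not describe the framework's actual corrective loop.

```python
from datetime import datetime


def decide(record_synced_at: datetime, policy_updated_at: datetime,
           data_supports_action: bool, rule_permits_action: bool) -> str:
    """Give rule safeguards precedence and flag data older than the policy."""
    # Rule safeguards take precedence: a prohibited action is never released.
    if not rule_permits_action:
        return "deny"
    # Data older than the current policy version is treated as unverified.
    if record_synced_at < policy_updated_at:
        return "hold_until_resync"
    return "allow" if data_supports_action else "deny"
```

Holding the decision instead of denying it outright keeps the pipeline compliant while leaving room for the record to be refreshed.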

The analysis also identified timing

related errors within the orchestration layer, particularly when multiple

validation operations were triggered simultaneously. When semantic reasoning,

SQL queries, and rule evaluations executed under high load conditions, the

system occasionally produced partial interpretations or incomplete validation

sets. These timing issues manifested as latency spikes or incomplete reasoning

chains, which required the orchestration engine to pause, reconstruct the

missing components, and revalidate the combined output. Despite these temporary

disruptions, the system demonstrated an ability to recover without compromising

decision accuracy, indicating that the architecture’s integration layer plays a

central role in maintaining workflow continuity.
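
The recovery behavior described above (detect an incomplete validation set, rebuild the missing parts, then continue) can be approximated with `asyncio`. This is a sketch, not the orchestration engine evaluated in the study: `run_layers`, its `timeout` and `max_rounds` parameters, and the layer names used in the example are assumptions.

```python
import asyncio


async def run_layers(layers, timeout=0.5, max_rounds=3):
    """Run layer coroutines concurrently; re-run only those that did not finish."""
    results = {}
    for _ in range(max_rounds):
        pending = {name: fn for name, fn in layers.items() if name not in results}
        if not pending:
            break
        tasks = {name: asyncio.create_task(fn()) for name, fn in pending.items()}
        done, not_done = await asyncio.wait(list(tasks.values()), timeout=timeout)
        for task in not_done:
            task.cancel()
        # Wait for cancellations to settle so no task outlives this round.
        await asyncio.gather(*not_done, return_exceptions=True)
        for name, task in tasks.items():
            if task in done and not task.cancelled() and task.exception() is None:
                results[name] = task.result()
    missing = sorted(set(layers) - set(results))
    if missing:
        raise TimeoutError(f"layers did not complete: {missing}")
    return results
```

A call such as `asyncio.run(run_layers({"semantic": semantic, "sql": sql, "rules": rules}))` expects each value to be a zero-argument coroutine function. Results from layers that finished in an earlier round are kept, so only the missing components are recomputed before the combined output is returned.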

Another category of observed errors

involved contextual misalignment in multi step decision scenarios. When inputs

referenced historical behavior or required multi step inference, the language

model occasionally over generalized patterns based on prior interactions,

introducing assumptions not supported by SQL evidence or rule governed

constraints. In these cases, the system corrected itself by revalidating each

inference step against authoritative data before confirming the final decision.

This pattern illustrates how cognitive architectures must balance interpretive

continuity with factual grounding to avoid decision inflation, where the system

extrapolates beyond valid operational boundaries.

A different error pattern emerged in

cases involving incomplete rule sets or ambiguous policy interpretations. When

rule engines encountered overlapping conditions or insufficiently defined

policies, the system produced inconsistent decision outcomes that depended on

the sequence in which rule checks were applied. These behaviors highlight

natural limitations in rule driven architectures, demonstrating the need for

continuous refinement of policy definitions to ensure consistent automation.

The cognitive framework mitigated these inconsistencies by applying semantic

reasoning to interpret ambiguous rule conditions, improving coherence across

policy driven decision pathways.
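
The order dependence described above is easy to reproduce with two overlapping rules. The sketch below shows the inconsistency and one conventional remedy, an explicit priority on each rule. That remedy is a simpler technique substituted here for illustration and is not the semantic interpretation step the framework applies; the rule names, conditions, and priorities are hypothetical.

```python
from dataclasses import dataclass
from itertools import permutations
from typing import Callable


@dataclass(frozen=True)
class Rule:
    name: str
    applies: Callable[[dict], bool]
    action: str
    priority: int  # higher value wins; an assumption of this sketch


RULES = (
    Rule("vip_fast_track", lambda order: order["tier"] == "vip", "approve", priority=1),
    Rule("large_order_review", lambda order: order["amount"] > 1000, "review", priority=2),
)


def first_match(rules, order):
    """Return the action of the first applicable rule in list order."""
    for rule in rules:
        if rule.applies(order):
            return rule.action
    return "no_rule"


def by_priority(rules, order):
    """Evaluate rules in descending priority so list order no longer matters."""
    return first_match(sorted(rules, key=lambda rule: -rule.priority), order)


def outcomes(evaluate, order):
    """Collect the distinct actions produced across every ordering of RULES."""
    return {evaluate(list(perm), order) for perm in permutations(RULES)}
```

For a large order from a VIP customer both rules apply, so `first_match` yields either action depending on the sequence, while `by_priority` yields the same action for every ordering.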

The system also exhibited resilience

in detecting and correcting semantic noise introduced by irregular or user

generated input variations. When queries included colloquialisms, shorthand

descriptions, or inconsistent terminology, the language model’s interpretive

unit generated multiple candidate meanings, some of which conflicted with SQL

verified facts. Through iterative refinement loops, the framework eliminated

invalid interpretations by applying cross layer validation, demonstrating the

robustness of the multi-layer architecture in filtering linguistic noise. This

iterative process not only improved decision quality but also contributed to

system learning by identifying recurring language patterns that previously led

to incorrect interpretations.

Another important error behavior

involved cascading conflicts across layers. In rare cases, an incorrect

interpretation from the semantic layer combined with outdated SQL records and

loosely defined policies created a multi layered conflict that required system

level intervention. The orchestration engine resolved these by suspending the

decision flow, initiating a structured diagnosis, and reprocessing each layer

independently before reconstructing the decision sequence. This recovery

pattern demonstrates the importance of a modular architecture where each layer

can be reevaluated without destabilizing the entire pipeline.

The overall analysis of error

behaviors provides valuable insights into how cognitive decision automation

systems must be designed to handle interpretive variability, factual

contradictions, timing irregularities, and policy ambiguities. The patterns observed

across these scenarios illustrate not only the complexity of real time decision

automation but also the strengths of a hybrid model that integrates semantic

reasoning, SQL validation, and rule-governed oversight. By examining how errors

originate, propagate, and are resolved, the study offers a deeper understanding

of the architectural safeguards required to ensure reliable and consistent

decision outcomes within enterprise environments. These insights form a

critical foundation for refining the framework and guiding future improvements

in cognitive automation systems.

8. Conclusion & Future Work

The study examined how cognitive

decision automation can be strengthened through the integration of language

model reasoning, SQL based factual validation, and rule governed decision

oversight. The findings support the argument that enterprise decision systems

benefit significantly from architectures that harmonize semantic understanding

with structured data verification and policy driven constraints. The proposed

framework demonstrates that interpretive reasoning alone is insufficient for

reliable automation and that anchoring cognitive outputs in validated

information and predefined governance logic leads to more accurate,

transparent, and dependable decision outcomes. The cumulative evidence suggests

that this hybrid model addresses fundamental limitations in traditional

automation systems and positions organizations to handle complex, data

sensitive decision processes more effectively.

A central contribution of the study

is the articulation of a multi-layer decision architecture capable of

supporting both dynamic reasoning and structural consistency. The research

shows that language models excel at interpreting user intent, resolving

linguistic ambiguity, and identifying contextual relationships, yet they must

be paired with structured validation to ensure precision and accountability.

SQL datastores serve as factual anchors that confirm or refute semantic

interpretations, while rule engines enforce normative boundaries that reflect

organizational standards and compliance requirements. By demonstrating how

these layers interact in real time, the study provides a principled approach to

designing decision automation systems that remain reliable under varying

operational conditions.

The practical implications of this

work extend across sectors where decision automation intersects with regulatory

expectations, data centric operations, and human in the loop oversight.

Enterprise environments such as finance, healthcare, supply chain management,

and customer experience systems require both interpretive intelligence and

structured accountability. The findings illustrate that cognitive automation

can be safely deployed when reinforced by deterministic validation pathways and

policy constraints. This hybrid approach reduces operational risk, enhances

decision traceability, and creates opportunities for scalable automation in

workflows that traditionally required significant manual intervention due to

ambiguity or compliance sensitivity.

The study also offers theoretical

contributions to ongoing discourse on intelligent systems and enterprise

architecture. By framing cognitive decision automation as a layered integration

problem rather than a model centric exercise, the research expands the

conceptual foundations of decision intelligence. It highlights the need to

consider not only the capabilities of individual components but also the

orchestration logic that connects them. This shift in perspective encourages

future research to investigate how knowledge flows, control mechanisms, and

validation logic can be aligned to support trustworthy automated decisions at

scale. The integration model presented here serves as a reference point for

researchers exploring hybrid approaches that merge probabilistic reasoning with

structured verification.

Despite its contributions, the study

acknowledges that implementing cognitive automation in real enterprise contexts

presents challenges that require further exploration. Language model behavior

can vary under edge conditions, SQL datastores may not always reflect real time

system states, and rule engines may contain ambiguities or evolving policies

that complicate automation. Future research should examine how self-monitoring

mechanisms, adaptive rule refinement, and real time data synchronization can

enhance the stability and robustness of the framework. Additional work is also

needed to evaluate fairness, bias mitigation, and ethical guardrails when

deploying cognitive automation in high stakes decision environments.

Another area for future

investigation involves creating standardized evaluation benchmarks for hybrid

decision systems. Current benchmarks often emphasize either language model

accuracy or structured data performance, but they rarely capture the complexities

of multi-layer decision workflows. Developing testing methodologies that

simulate policy changes, data drift, and evolving operational contexts would

strengthen the ability of organizations to validate cognitive automation

systems before deployment. Longitudinal studies could also assess system

learning behaviors and performance trends over time, providing insight into how

decision pipelines adapt to changing enterprise landscapes.

The findings also open new

opportunities for exploring human machine collaboration in decision workflows.

As cognitive automation systems become more capable, understanding how to

integrate human expertise with automated reasoning becomes increasingly important.

Future work could investigate interfaces that allow human review of

intermediate decision stages, mechanisms for querying model reasoning, and

feedback channels that enable systems to learn from expert corrections. Such

hybrid human in the loop designs may further enhance interpretability,

accountability, and acceptance of cognitive automation within enterprise

settings.

In conclusion, the cognitive

decision automation framework presented in this study demonstrates that

integrating language models, SQL datastores, and rule engines yields a balanced

and resilient decision ecosystem capable of supporting complex enterprise

workflows. The architecture’s ability to combine interpretive flexibility with

structured verification and governance makes it well suited for organizations

seeking to modernize their operational intelligence without compromising

control or reliability. The study provides both theoretical foundations and

practical pathways for advancing enterprise decision automation and offers a

starting point for further research aimed at refining, scaling, and governing

intelligent decision systems in diverse operational environments.
