Explainable AI Frameworks for Patient-Level Claims Data Analytics

Abstract

Artificial intelligence-driven

analytics applied to patient‑level healthcare claims data have become central

to pharmaceutical decision‑making, enabling prediction of treatment adherence,

therapy switching and longitudinal patient behavior. However, the increasing

reliance on complex machine learning models has introduced significant concerns

related to transparency, interpretability and trust-particularly in regulated

environments where decisions influence patient access, real‑world evidence

generation and strategic planning.

This study

proposes a comprehensive explainable

artificial intelligence (XAI) framework tailored for patient‑level

claims data analytics in pharmaceutical contexts. The framework integrates high‑performance

predictive modeling with post‑hoc interpretability techniques, including

feature attribution and local explanation methods, to ensure model outputs

remain transparent, clinically meaningful and decision‑relevant. In addition, a

dedicated governance and auditability layer is embedded to support regulatory

compliance, traceability and responsible AI deployment across the analytics

lifecycle.

By structuring

explainability as a first‑class system capability-rather than an afterthought-the

proposed framework enables stakeholders to understand not only what predictions are generated, but why they are produced and how they can be acted upon. This work

contributes to the growing body of trustworthy AI research by offering a

scalable, enterprise‑ready approach that balances predictive performance with

interpretability, supporting real‑world evidence development and patient‑centric

pharmaceutical analytics.

Keywords: Explainable AI, Healthcare Claims

Data, Patient Behavior Analytics, Model Interpretability, Pharmaceutical

Analytics, Treatment Adherence, Real World Evidence

1.

Introduction

Healthcare claims

data have emerged as one of the most valuable sources of real‑world data for

understanding patient‑level healthcare utilization, treatment pathways and cost

dynamics across large populations. Generated through insurance billing and

administrative processes, claims datasets capture detailed longitudinal records

of diagnoses, procedures, prescription activity and provider interactions,

enabling sustained observation of patient behavior over time1. As a result, claims data play a

foundational role in pharmaceutical analytics, health economics, outcomes

research and real‑world evidence (RWE) generation.

The growing scale and

availability of patient‑level claims data have accelerated the adoption of

advanced analytical methods aimed at predicting clinically and commercially

relevant outcomes such as medication adherence, treatment switching,

hospitalization risk and disease progression2.

Machine learning models have become central to these efforts, allowing

pharmaceutical organizations to identify complex patterns within high‑dimensional

claims datasets that are difficult to capture using traditional statistical

techniques3. In practice, such

models support critical decision‑making processes, including patient

segmentation, intervention targeting, market access planning and post‑market

effectiveness assessment.

Despite these

advances, the increasing use of sophisticated machine learning techniques has

introduced a major challenge: the

opacity of black‑box models. Many high‑performing approaches, including

ensemble methods and neural networks, provide limited insight into the

reasoning behind their predictions4,5.

In pharmaceutical and healthcare settings-where analytical outputs directly

influence patient care strategies, regulatory submissions and payer engagement-this

lack of transparency can undermine trust, hinder adoption and complicate

compliance requirements.

Explainable

artificial intelligence (XAI) has emerged as a critical response to these

concerns, aiming to make complex model behavior understandable to human

stakeholders. Techniques such as feature attribution, surrogate models and

local explanations offer mechanisms to interpret predictions without

sacrificing model performance6.

However, in many applied settings, explainability is implemented in an ad hoc

or fragmented manner, detached from broader analytics workflows, governance

standards and operational decision‑making needs.

In pharmaceutical

claims analytics, this fragmentation is particularly problematic. Existing

approaches often focus narrowly on model performance or explanation techniques

in isolation, failing to address the full lifecycle of AI deployment-from data

preprocessing and modeling to explanation generation, auditability and

regulatory defensibility. Moreover, current research rarely frames

explainability as a decision‑enabling

capability, aligned with real‑world pharmaceutical use cases such as

adherence intervention design, treatment optimization and evidence‑based

strategy development.

To address these

gaps, this study proposes a structured

explainable AI framework specifically designed for patient‑level claims data analytics

in pharmaceutical environments. The framework integrates predictive

modeling with interpretable explanation techniques and embeds governance

mechanisms that ensure transparency, traceability and compliance across the

analytics pipeline7. The contributions

of this work are threefold:

·

It introduces a unified architectural

framework combining data processing, modeling, explainability and governance;

·

It demonstrates how explainability

enhances the usability and trustworthiness of AI‑driven insights in pharmaceutical

decision‑making; and

·

It provides a scalable foundation for

responsible AI adoption in real‑world evidence and patient‑centric analytics.

2. Literature Review

2.1. Overview of explainable

AI techniques

Explainable

artificial intelligence (XAI) has emerged as a critical area of research in

response to the growing adoption of complex machine learning models whose

internal decision processes are difficult to interpret. High‑performing

algorithms-such as ensemble methods and deep neural networks-often operate as

black boxes, creating challenges in domains where decision transparency is

essential4. To address this gap,

a range of explainability techniques has been developed to provide insight into

model behavior without sacrificing predictive performance.

Among the most widely

adopted methods are Local Interpretable

Model‑Agnostic Explanations (LIME) and Shapley Additive Explanations (SHAP). LIME generates local,

instance‑level explanations by approximating the behavior of complex models

with simpler surrogate models in the vicinity of a specific prediction2. SHAP, grounded in cooperative game

theory, assigns contribution values to individual features in a manner that is

both consistent and theoretically sound6.

Other commonly used techniques include partial dependence plots (PDPs),

counterfactual explanations and rule‑based surrogate models, each offering

varying levels of interpretability, scope (local vs. global) and computational

complexity.

Collectively, these approaches

aim to bridge the gap between predictive accuracy and human‑understandable

reasoning. However, their practical value depends not only on theoretical

soundness but also on their ability to support real decision workflows-particularly

in regulated and high‑impact environments such as healthcare and

pharmaceuticals.

2.2. Explainable AI

in healthcare applications

The application of

explainable AI within healthcare has expanded rapidly across domains such as

clinical risk prediction, diagnostic support, disease prognosis and treatment

planning. In these contexts, interpretability is essential for validating model

outputs, ensuring clinical relevance and supporting trust among practitioners

and patients alike8. XAI

techniques enable stakeholders to identify the key drivers behind predictions-such

as risk factors, treatment indicators or utilization patterns-thereby

supporting more transparent and accountable decision‑making.

Recent studies

demonstrate that embedding explainability within healthcare AI systems improves

model validation, facilitates bias detection and enhances adoption among

clinicians and healthcare administrators9.

Importantly, explainability also plays a critical role in aligning AI outputs

with established clinical knowledge, helping domain experts assess whether

predictions are plausible and actionable rather than statistically opaque.

Despite this

progress, much of the existing healthcare XAI literature focuses on clinical or

imaging‑centric applications. Comparatively less attention has been given to real‑world administrative data, such

as claims, where interpretability must support not only clinicians but also

pharmaceutical decision‑makers, regulators and policy stakeholders.

2.3. Claims data

analytics and patient-level prediction

Healthcare claims

data analytics centers on extracting insight from large‑scale administrative

records that capture patient interactions with the healthcare system over time.

Claims‑based machine learning models are widely used to predict outcomes such

as medication adherence, treatment switching, hospitalization likelihood and

cost trajectories10. These

predictions are particularly valuable in pharmaceutical contexts, where

understanding patient‑level dynamics informs intervention strategies, post‑market

evaluation and real‑world evidence generation.

The high

dimensionality and longitudinal structure of claims data often necessitate the

use of advanced machine learning models capable of capturing non‑linear

relationships and temporal dependencies. While such models deliver strong

predictive performance, they frequently reduce interpretability-limiting

visibility into how patient attributes, utilization patterns and treatment

histories interact to produce specific outcomes. This trade‑off becomes

especially problematic in pharmaceutical decision‑making, where predictions

must be explainable to support regulatory submissions, payer engagement and

patient‑centric program design.

2.4. Limitations of existing

approaches

Despite the maturation

of XAI techniques, several limitations persist in their application to

healthcare claims analytics. First, many interpretability methods are applied post hoc and operate independently of

the broader analytics lifecycle. As a result, explanations may not fully

capture the internal logic of predictive models or may vary significantly

across different interpretation techniques, leading to ambiguity in decision

support5.

Second, existing

approaches often lack integration with governance and compliance mechanisms. In

regulated environments, explainability must extend beyond feature attribution

to include traceability of data inputs, model versions, assumptions and outputs

over time. Current studies frequently emphasize model accuracy and

interpretability in isolation, without addressing auditability, reproducibility

or alignment with real‑world evidence standards7.

Finally, many XAI

implementations are not designed with enterprise scalability in mind.

Computational cost, explanation instability and limited support for

longitudinal analysis can hinder adoption in real‑world pharmaceutical

analytics platforms that operate at population scale.

2.5. Research gap

synthesis

The literature

reveals a clear gap in the development of integrated explainable AI frameworks tailored to patient‑level

healthcare claims data analytics. While individual explainability techniques

such as SHAP and LIME are well established, their application remains

fragmented and insufficiently connected to governance, auditability and

decision workflows in pharmaceutical environments.

Specifically, there

is a lack of end‑to‑end frameworks that unify data preprocessing, predictive

modeling, explainability, continuous monitoring and regulatory compliance

within a single operational architecture. Addressing this gap requires moving

beyond ad hoc interpretation of black‑box models toward system‑level explainability, where transparency is embedded

throughout the analytics lifecycle and aligned with real‑world pharmaceutical

decision‑making.

This research

responds to that need by proposing a comprehensive explainable AI framework

that balances predictive performance with interpretability and governance,

supporting trustworthy, patient‑centric and enterprise‑ready claims data

analytics (Table 1).

Table 1:

Summary of Existing XAI Methods in

Healthcare.

|

Method |

Scope |

Primary Output |

Best For (Decision Use) |

Strengths |

Limitations / Cautions |

|

LIME |

Local |

Local

surrogate explanation |

Why this patient was flagged

(case review, outreach decisions) |

Model‑agnostic;

intuitive |

Can

be unstable across runs |

|

SHAP |

Global + Local |

Feature

contributions (Shapley values) |

Global drivers + patient‑level rationale

(governed insights, stakeholder trust) |

Consistent;

theoretically grounded |

Computationally

intensive |

|

PDP |

Global |

Marginal

feature effect curve |

Feature effect intuition

(policy/threshold discussions) |

Easy

to visualize |

Assumes

feature independence |

|

Counterfactuals |

Local |

Minimal changes for

different outcome |

Actionability (what changes could reduce switching

/ non‑adherence) |

Actionable;

user-friendly |

Hard for complex

constraints |

|

Rule‑based models |

Global |

Interpretable

rules |

High transparency requirements |

Highly

transparent |

Lower

predictive accuracy |

3. Theoretical

Foundation

3.1. Interpretability

vs Explainability

Interpretability and

explainability are closely related but conceptually distinct constructs in

artificial intelligence. Interpretability

refers to the inherent transparency of a model’s internal mechanics, allowing

users to directly understand how inputs are transformed into outputs. Models

such as linear regression or decision trees are often considered interpretable

because their structure and decision logic can be examined directly10. However, these models may lack the

expressive power required to capture complex, non‑linear relationships common

in high‑dimensional healthcare claims data.

Explainability, by contrast, addresses the challenge

of understanding complex or opaque models by applying external techniques that

make their behavior intelligible without exposing internal structure11. In practice, explainability enables

stakeholders to reason about predictions from high‑performing models-such as

gradient boosting machines and neural networks-using post hoc interpretation

methods. This distinction is particularly relevant in pharmaceutical analytics,

where predictive accuracy alone is insufficient unless outputs can be

explained, validated and defended across medical, regulatory and business

contexts.

For patient‑level

claims analytics, explainability therefore represents a strategic capability rather than a technical enhancement. It

enables organizations to retain advanced modeling performance while meeting the

transparency and accountability expectations required for real‑world evidence

(RWE) generation and decision support.

3.2. Trust, Fairness

and Accountability in healthcare AI

Trust is a foundational

requirement for the adoption of AI systems in healthcare and pharmaceutical

decision‑making. Unlike consumer analytics, healthcare AI influences decisions

that affect patient access, treatment pathways and population‑level outcomes.

Explainability contributes directly to trust by enabling stakeholders to assess

whether model predictions are plausible, consistent with domain knowledge and

supported by meaningful data signals8.

Fairness is a critical related dimension, particularly

in patient‑level analytics where biases embedded in historical claims data may

lead to unequal outcomes across demographic or clinical subgroups. Explainable

AI techniques support fairness assessment by revealing feature contributions

and highlighting whether sensitive attributes or proxies disproportionately

influence predictions9. Without

explainability, such biases may remain hidden, undermining both ethical

responsibility and regulatory credibility.

Accountability refers to the ability to trace, justify

and audit decisions generated by AI systems. In pharmaceutical environments,

accountability extends beyond model development to include how predictions are

used in downstream processes such as patient targeting, adherence interventions

and evidence planning. Explainability mechanisms provide the transparency

required to document why decisions were made and to support internal

governance, external review and regulatory scrutiny.

Together, trust,

fairness and accountability form a triad

of responsible AI principles that are especially relevant in claims‑based

analytics, where decisions must be both scalable and defensible.

3.3. Regulatory and governance

perspectives

The application of AI

to healthcare and pharmaceutical analytics operates within an increasingly

stringent regulatory and governance landscape. Regulatory bodies and payers

place growing emphasis on the credibility, reproducibility and transparency of

real‑world evidence used to support decision‑making. As AI models become more

deeply embedded in evidence generation and strategy formulation, the ability to

explain and audit their outputs becomes essential. Governance frameworks play a

central role in operationalizing explainability within this context. Effective

governance encompasses model documentation, version control, performance

monitoring, bias detection and traceability of data inputs and analytical

assumptions7. In patient‑level

claims analytics, governance ensures that predictions and explanations can be

reviewed, reproduced and validated over time—rather than existing as one‑off

analytical artifacts.

Importantly,

explainability strengthens governance by linking technical model behavior to documented decision logic. It

enables organizations to demonstrate not only what a model predicts, but how

those predictions were derived and whether they align with established clinical

and business reasoning. This alignment is critical for supporting regulatory

submissions, payer engagement and responsible use of AI in population‑scale

analytics.

Within this

theoretical framework, explainability is therefore not treated as an optional

model add‑on, but as a core pillar of trustworthy, enterprise‑ready AI systems

in pharmaceutical claims analytics.

4. Proposed explainable

AI framework

4.1. Framework overview

(Architecture

and Design Principles)

The proposed

explainable AI framework is designed as a modular, layered enterprise architecture that integrates patient‑level

healthcare claims data, predictive modeling, explainability mechanisms and

governance controls into a single, cohesive analytics system. The primary

objective of the framework is to ensure that predictions related to patient

behavior-such as treatment switching, medication adherence and healthcare

utilization-are not only accurate but also transparent, auditable and decision‑ready5.

Unlike ad hoc XAI

implementations that focus narrowly on model interpretation, this framework

adopts a governance‑by‑design approach,

where explainability, traceability and accountability are embedded across the

full analytics lifecycle. The architecture supports end‑to‑end workflows,

beginning with claims data ingestion and extending through prediction,

explanation, monitoring and regulatory defensibility. Each layer performs a

well‑defined function while maintaining interoperability through standardized data

flows, metadata capture and feedback mechanisms.

From an enterprise

deployment perspective, the framework is designed to operate at population scale, support longitudinal

analytics and integrate seamlessly into pharmaceutical decision‑making

environments that require reproducibility, role‑based access and auditability.

The layered design enables organizations to evolve individual components-such

as models or explanation techniques-without destabilizing the overall system.

4.2. Framework components

4.2.1. Data layer: Claims

data ingestion and preprocessing

The data layer forms the foundation of the

framework and is responsible for ingesting, standardizing and preparing patient‑level

healthcare claims data from multiple sources, including medical claims,

pharmacy claims and provider billing records3.

Given the longitudinal and heterogeneous nature of claims data, this layer

performs critical preprocessing tasks such as data cleaning, normalization,

feature extraction and temporal alignment.

Key data elements

include diagnosis codes, procedure codes, prescription histories, refill

timing, healthcare utilization metrics and demographic attributes. To support

downstream explainability and governance, the data layer also captures metadata and lineage information,

enabling traceability of how raw records are transformed into analytical

features. This foundation is essential for ensuring consistency across model

training, prediction generation and explanation outputs.

4.2.2. AI modeling layer: Predictive analytics

at patient scale

The AI modeling layer applies machine

learning algorithms to generate patient‑level predictions aligned with

pharmaceutical analytics use cases, such as medication adherence risk,

likelihood of treatment switching and hospitalization propensity. Models

including gradient boosting methods (e.g., XGBoost), random forests and neural

networks are used to capture complex, non‑linear relationships inherent in high‑dimensional

claims data12.

Model selection

within this layer balances predictive performance with explainability

compatibility and operational robustness. Importantly, models are not treated

as isolated artifacts; they are deployed within a controlled lifecycle that

includes versioning, performance monitoring and validation checkpoints. This

design supports repeatability and ensures that model outputs remain consistent

and defensible over time, especially when used in real‑world evidence

generation or strategic decision‑making.

4.2.3. Explainability layer: Structured transparency

and model insight

The explainability layer operationalizes

transparency by integrating post hoc interpretability techniques as a native

system capability rather than an external analytic step. Techniques such as

SHAP and LIME are used to generate both global

explanations (e.g., feature importance trends across populations) and local explanations (e.g., drivers of

individual patient predictions).

SHAP values quantify

the contribution of each feature to a prediction in a consistent and theoretically

grounded manner, enabling population‑level insight and cross‑model comparison6. LIME complements this by providing

localized explanations for individual predictions, supporting case‑level

reasoning and validation. Together, these methods enable stakeholders to

understand why predictions are

produced and assess whether they align with clinical knowledge, business logic

and ethical expectations.

Crucially,

explanation artifacts are treated as first‑class

outputs, stored, versioned and linked to their corresponding data inputs

and model versions. This design ensures explanations can be audited, reproduced

and reviewed—an essential requirement for enterprise adoption and regulatory

confidence.

4.2.4. Governance layer: Accountability,

Compliance and monitoring

The governance layer enforces

accountability and regulatory alignment across the analytics lifecycle. It

establishes mechanisms for audit trails, bias detection, performance tracking

and documentation of analytical assumptions. This layer ensures that every

prediction and explanation can be traced back to its underlying data, model

configuration and execution context7.

Governance functions

include continuous monitoring of model drift, validation of explanation

stability and enforcement of compliance standards related to data privacy and

real‑world evidence usage. Role‑based controls enable appropriate access to

predictions and explanations, supporting both internal governance and external

review. By embedding governance directly into the framework, explainability

becomes operational rather than aspirational-supporting trust at scale.

4.3. Feedback loop

for continuous learning and control

A key strength of the

proposed framework is its closed

feedback loop, which connects governance and performance monitoring back

to the data, modeling and explainability layers. Observed outcomes-such as

changes in patient behavior, intervention effectiveness or shifts in data

distributions-are fed back into the system to recalibrate models, refine

features and validate explanation relevance13.

This continuous

learning mechanism enables the framework to adapt to evolving healthcare

dynamics while maintaining analytical consistency and control. Importantly,

updates are governed rather than automatic: changes are logged, reviewed and

validated to ensure that adaptability does not compromise transparency or

trust. This balance between agility and governance is especially critical in

pharmaceutical environments, where both innovation and accountability are

required.

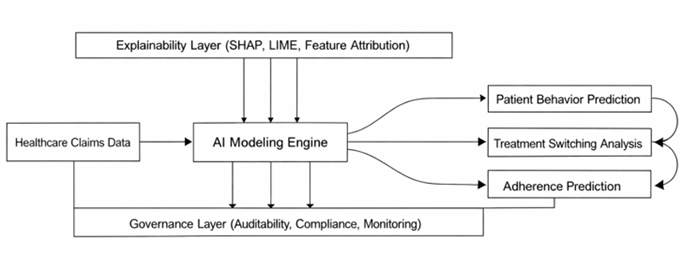

Figure 1:

Conceptual framework illustrating the

integration of healthcare claims data, AI modeling, explainability mechanisms

and governance layers for transparent patient-level analytics. The framework

highlights the flow from data ingestion to predictive outputs, including

patient behavior, treatment switching and adherence, with embedded

explainability and feedback loops for continuous model improvement.

Figure 2:

Layered architecture of the proposed

explainable AI system for healthcare claims analytics. The diagram presents the

hierarchical structure consisting of the data layer, AI modeling layer,

explainability layer and governance layer, with bidirectional data flow and

feedback mechanisms to support continuous learning, auditability and regulatory

compliance.

5. Research

Methodology

This study adopts a structured, decision‑oriented research

methodology to design and evaluate an explainable artificial

intelligence framework for patient‑level healthcare claims data analytics.

Rather than focusing exclusively on predictive accuracy, the methodology

emphasizes decision validity,

explainability quality and deployment readiness-criteria that are

essential for real‑world adoption in pharmaceutical analytics environments8.

5.1.

Data foundation and analytical context

The analysis is based

on longitudinal healthcare claims data,

integrating medical and pharmacy records that capture patient interactions

across diagnoses, prescriptions and healthcare utilization over time9. The dataset includes structured

attributes such as diagnosis codes, procedure codes, prescription histories,

refill timing and demographic characteristics, enabling end‑to‑end tracking of

patient treatment trajectories and behavioral patterns14.

From a pharmaceutical

analytics perspective, the longitudinal nature of claims data is particularly

critical because it allows for observation of temporal dynamics, including changes in adherence behavior,

treatment switching events and utilization patterns. These longitudinal

properties directly support real‑world evidence generation and patient‑centric

decision‑making rather than static, cross‑sectional analysis11.

5.2.

Feature engineering for explainability and decision relevance

Feature engineering

is performed with explicit consideration of interpretability and decision relevance, not solely predictive

power. Derived variables are constructed to represent clinically and

operationally meaningful dimensions of patient behavior and health status.

Medication adherence

is quantified using established measures such as proportion of days covered (PDC), providing interpretable

indicators of therapy compliance. Treatment switching is captured through

features reflecting the frequency, timing and sequence of regimen changes12. Clinical complexity is incorporated

using comorbidity indices derived from diagnosis codes, while healthcare

utilization is represented through inpatient admissions, outpatient visits and

pharmacy activity.

Temporal features are

engineered to capture trends and transitions in patient behavior over time,

allowing models to reflect evolving risk rather than static snapshots5. This feature design ensures that

downstream explanations can be meaningfully interpreted by domain experts and

aligned with pharmaceutical decision workflows.

5.3.

Predictive modeling with explainability compatibility

The predictive

modeling process involves the application and comparison of multiple machine learning algorithms

capable of handling high‑dimensional, structured claims data. Models evaluated

include gradient boosting (e.g., XGBoost), random forest classifiers and neural

network architectures, each selected for their ability to capture complex non‑linear

relationships12.

Model selection

criteria extend beyond performance metrics to include stability, explainability compatibility and operational robustness.

Gradient boosting models, in particular, are emphasized due to their strong

performance in structured data settings and compatibility with post‑hoc

explanation techniques. Models are trained, validated and versioned in a

controlled manner to support reproducibility and lifecycle management-both

critical for enterprise deployment.

5.4.

Explainability integration and evaluation

Explainability is

embedded directly into the analytical pipeline using post‑hoc interpretability techniques rather than being applied as

an external or ad hoc step. SHAP is employed to generate both global and local

explanations by quantifying the contribution of individual features to model

predictions in a consistent and theoretically grounded manner6,7.

Complementarily, LIME

is applied to produce localized, instance‑level explanations by approximating

model behavior in the vicinity of individual predictions2. Together, these techniques support

explanation at both population and patient levels-an essential requirement for

pharmaceutical analytics where decisions must be justified across cohorts as

well as individual cases.

Explainability

outputs are evaluated not only for interpretability, but also for:

·

Stability

across model runs and datasets.

·

Consistency

between explanation methods.

·

Plausibility

relative to clinical and domain knowledge.

This evaluation

ensures that explanations are reliable and actionable rather than purely

descriptive.

5.5.

Evaluation framework: Beyond model accuracy

The framework is

evaluated using a multi‑dimensional

assessment strategy that reflects real‑world pharmaceutical decision

requirements. Predictive performance is measured using standard classification

metrics, including accuracy, precision, recall and area under the receiver

operating characteristic curve. However, these metrics are treated as necessary

but insufficient conditions for deployment.

To assess decision validity, interpretability

evaluation focuses on whether explanations:

·

Identify clinically meaningful drivers

of behavior.

·

Remain consistent across patient

subgroups.

·

Support defensible intervention planning.

Trustworthiness is

evaluated through transparency, reproducibility and alignment with established

medical and pharmaceutical knowledge1.

This approach ensures that model outputs and explanations are suitable for

consumption by non‑technical stakeholders involved in patient programs,

evidence planning and strategic decision‑making.

5.6.

Enterprise deployment readiness

Beyond analytical

performance, the methodology explicitly considers enterprise deployment readiness. This includes assessing

computational scalability of explanation techniques, reproducibility of results

and compatibility with governance and compliance requirements.

Explainability

artifacts are treated as first‑class outputs-stored, versioned and linked to

specific data inputs and model configurations. Continuous monitoring mechanisms

are assumed to track model performance, explanation stability and drift over

time, supporting responsible deployment in dynamic healthcare environments

(Ahmad et al., 2018).

By aligning technical

evaluation with governance, trust and operational scalability, the methodology

ensures that the proposed framework is suitable not only for experimental

validation but also for real‑world pharmaceutical analytics platforms (Table

2).

Table 2: Dataset

Variables and Features.

|

Domain |

Feature Group |

Variable |

Description |

Data Type |

|

Demographic |

Patient Profile |

Age |

Patient age |

Numerical |

|

Demographic |

Patient Profile |

Gender |

Patient gender |

Categorical |

|

Clinical |

Clinical Condition |

Diagnosis Codes |

Disease classification (ICD) |

Categorical |

|

Treatment |

Therapy History |

Medication History |

Prescription records over time |

Sequential |

|

Behavioral |

Treatment Behavior |

Adherence Rate |

Proportion of days covered (PDC) |

Numerical |

|

Behavioral |

Treatment Behavior |

Switching Indicator |

Change in treatment regimen |

Binary |

|

Utilization |

Healthcare Utilization |

Hospital Visits |

Number of inpatient admissions |

Numerical |

|

Utilization |

Healthcare Utilization |

Outpatient Claims |

Frequency of outpatient services |

Numerical |

6. Experimental

Results and Analysis

6.1. Predictive performance as decision enablement

The predictive models

were evaluated using standard classification metrics, including accuracy,

precision, recall and area under the receiver operating characteristic curve

(AUC). While these metrics provide an essential baseline for assessing statistical

performance, they are interpreted here primarily in terms of decision enablement rather than

algorithmic comparison alone.

Among the evaluated

models, gradient boosting (XGBoost) demonstrated the strongest overall

performance, exhibiting high accuracy and robustness in predicting patient‑level

outcomes such as medication adherence and treatment switching. Neural network

models achieved comparable performance but required significantly higher

computational resources, while random forest models offered a balanced trade‑off

between performance stability and interpretability. Logistic regression,

although more transparent by design, exhibited lower predictive strength,

limiting its suitability for complex claims‑based decision scenarios.

From a pharmaceutical

analytics perspective, these findings indicate that high‑performing models are necessary but not sufficient. The

selected models must not only predict outcomes reliably, but also support

downstream explainability and governance requirements. Gradient boosting models

were therefore favored, as they provided strong predictive capability while

remaining compatible with explainability techniques essential for responsible

deployment.

6.2. Explainability outputs as decision

insights

Explainability analysis

played a central role in transforming model outputs into actionable decision insights. SHAP‑based

global explanations revealed a consistent set of high‑impact drivers across

models, including prior medication adherence, refill gaps, comorbidity burden

and healthcare utilization frequency. These features align closely with

established clinical and pharmaceutical understanding of patient behavior,

reinforcing confidence in the model’s reasoning.

At the population

level, explainability outputs enabled stakeholders to understand which factors systematically influence

outcomes, supporting cohort‑based planning and evidence development. At

the patient level, local explanations generated through LIME highlighted how

individual characteristics contributed to specific predictions, enabling case‑level

assessment and validation.

Rather than serving

as descriptive artifacts, explainability outputs were evaluated for decision relevance—specifically,

whether they:

·

Identified modifiable drivers suitable

for intervention.

·

Remained consistent across patient

subgroups.

·

Aligned with domain knowledge and real‑world

evidence expectations.

This evaluation

confirmed that explainability techniques meaningfully enhanced trust in model

outputs and supported their use in pharmaceutical decision workflows.

6.3. Scenario‑driven case analysis

6.3.1.

Treatment switching prediction as early warning

In treatment

switching prediction scenarios, the framework effectively identified patients

at elevated risk based on declining adherence patterns, widening refill gaps,

increasing comorbidity complexity and higher healthcare utilization.

Explainability outputs consistently showed that refill gap dynamics and recent

prescription instability were among the strongest contributors to switching

risk.

From a decision‑making

standpoint, these insights enable earlier

identification of at‑risk patients, allowing pharmaceutical teams to

intervene proactively-through patient support programs or evidence‑based

engagement strategies-rather than reacting after switching occurs. Importantly,

explanation transparency allowed stakeholders to distinguish between clinically

driven switching and behaviorally driven patterns, supporting more targeted

responses.

6.3.2.

Medication adherence risk stratification

In adherence

prediction scenarios, the model demonstrated high sensitivity in identifying

patients likely to become non‑adherent. SHAP analysis indicated that historical

adherence measures, medication complexity and demographic context were the

dominant contributors to risk.

These explainable

insights enable decision‑makers to move beyond binary risk flags toward interpretable risk stratification,

where interventions can be tailored based on why adherence risk is elevated. For example, interventions for

patients driven by regimen complexity may differ from those driven by access or

utilization instability. This differentiation is critical for designing

scalable, patient‑centric adherence strategies.

6.4.

Explainability versus black‑box performance trade‑offs

A comparison with non‑explainable

(“black‑box”) model deployments demonstrated that the integration of

explainability techniques did not

result in meaningful degradation of predictive performance. Instead,

explainability substantially improved transparency, validation capability and

stakeholder confidence without compromising accuracy6.

This finding

reinforces a key premise of the proposed framework: explainability and performance are not competing objectives in

well‑designed pharmaceutical analytics systems. When explainability is embedded

into the modeling and governance lifecycle, it strengthens decision quality

rather than constraining model capability.

6.5.

Implications for real‑world deployment

Beyond numerical

evaluation, the experimental analysis highlights how explainable AI frameworks

unlock practical value for real‑world pharmaceutical deployment. By linking

model predictions to interpretable drivers, the framework supports:

·

Auditable decision‑making aligned with

regulatory expectations

·

Cross‑functional adoption across

analytics, medical and strategy teams

·

Continuous monitoring and refinement of

models based on observed outcomes

These results

demonstrate that explainability transforms claims‑based analytics from

predictive outputs into defensible,

actionable decision intelligence, capable of operating at enterprise

scale (Tables 3 and 4).

Table 3:

Model Performance Comparison.

|

Model |

Accuracy |

Precision |

Recall |

AUC |

|

XGBoost |

0.89 |

0.87 |

0.85 |

0.92 |

|

Random Forest |

0.86 |

0.84 |

0.82 |

0.89 |

|

Neural Network |

0.88 |

0.86 |

0.84 |

0.91 |

|

Logistic Regression |

0.81 |

0.79 |

0.77 |

0.85 |

Table 4:

Explainability Output Summary.

|

Feature |

Relative Importance |

Effect on Outcome |

Decision Insight

(Why It Matters) |

|

Adherence History |

High |

Positive |

Past adherence

strongly predicts future adherence behavior and should anchor patient risk

stratification. |

|

Refill Gap |

High |

Negative |

Larger refill gaps

signal elevated non‑adherence risk and represent a modifiable intervention

point. |

|

Comorbidity Index |

Medium |

Positive |

Higher clinical

burden increases likelihood of treatment instability and switching. |

|

Age |

Medium |

Mixed |

Impact varies by

subgroup, indicating need for cohort‑specific interpretation rather than

global rules. |

|

Hospital Visits |

Low |

Positive |

Frequent inpatient

activity reflects underlying instability but is a secondary risk driver. |

Figure 3: Feature

Importance / SHAP Visualization.

(Figure 3)

SHAP based feature importance visualization illustrating the contribution of

key variables to model predictions in patient-level claims analytics. The chart

highlights the relative impact of factors such as adherence history, refill

gaps, comorbidity index, age and healthcare utilization on predictive outcomes.

Higher SHAP values indicate greater influence on the model’s decision, enabling

transparent interpretation of the drivers behind patient behavior predictions,

including treatment switching and medication adherence.

7.

Discussion

The findings of

this study highlight the practical value of integrating explainable AI into

patient-level claims data analytics11. From a pharmaceutical decision-making

perspective, the ability to interpret model predictions enhances strategic

planning, particularly in areas such as patient targeting, adherence

improvement programs and treatment optimization. Explainability enables

stakeholders to identify the underlying drivers of patient behavior, thereby

supporting more informed and evidence-based decisions2.

The incorporation

of explainability techniques significantly strengthens the role of real-world

evidence in healthcare analytics. By providing transparent insights into

predictive models, the framework improves the credibility and usability of

AI-driven outputs. This is particularly important in regulated environments,

where decision-making must be supported by interpretable and auditable

evidence.

However, the

study also highlights the inherent trade-off between predictive accuracy and

interpretability. While complex models such as gradient boosting and neural

networks offer higher accuracy, they require additional layers of explanation

to ensure transparency. Simpler models, although more interpretable, may not

capture the full complexity of claims data. The proposed framework addresses

this trade-off by combining high-performance models with robust explainability

techniques.

From a deployment

perspective, integrating explainable AI into pharmaceutical analytics systems

requires careful consideration of infrastructure, scalability and user

interface design14 Organizations must ensure that

explanations are not only accurate but also accessible and actionable for

decision-makers1.

Additionally,

continuous monitoring and updating of models are necessary to maintain

performance and relevance over time.

8.

Limitations

Despite its

contributions, this study has several limitations that merit consideration.

First, the quality and completeness of healthcare claims data can significantly

influence both predictive accuracy and explanation reliability. Coding

inconsistencies, missing data and variability across healthcare systems may

affect generalizability and require dataset‑specific adaptation.

Second, while

explainability techniques such as SHAP and LIME provide valuable insight, they

can introduce computational overhead, particularly in large‑scale, real‑time

environments. Enterprise deployments must therefore balance explanation

granularity with performance and cost considerations.

Finally organizational

readiness remains a non‑technical constraint. Effective adoption of explainable

AI requires stakeholder education, alignment across analytics and decision

teams and trust in both model outputs and explanations. These factors are

critical for translating technical capability into operational impact.

9.

Conclusion

This study presents a

comprehensive explainable artificial intelligence framework for patient‑level

healthcare claims data analytics with direct relevance to pharmaceutical

decision‑making. As machine learning models increasingly influence real‑world

evidence generation, patient targeting strategies and treatment optimization

efforts, the need for transparency, interpretability and accountability has

become critical.

The proposed

framework addresses this need by integrating high‑performance predictive

modeling with robust explainability techniques and embedded governance

mechanisms within a unified, scalable architecture. Results demonstrate that

advanced models such as gradient boosting and neural networks can be

effectively combined with post‑hoc interpretation methods, including SHAP and

LIME, without materially compromising predictive performance. More importantly,

the explainability layer enables stakeholders to understand the drivers of

model predictions, supporting informed, defensible and patient‑centric decision‑making.

By embedding

auditability, traceability and monitoring into the analytics lifecycle, the

framework aligns AI‑driven insights with regulatory expectations and real‑world

evidence standards. The inclusion of feedback loops further enables continuous

learning, allowing the system to adapt to evolving patient behavior and

healthcare dynamics while maintaining consistency and reliability.

Overall, this work

advances the practical application of explainable AI in healthcare claims

analytics by demonstrating that interpretability is not a trade‑off against

performance, but a prerequisite for responsible, enterprise‑scale deployment.

Future research may extend this framework through causal inference, privacy‑preserving

learning and federated architectures to further strengthen trust, robustness

and scalability in pharmaceutical analytics environments.

10. References

11. Molnar

C. Interpretable machine learning.

Lulu. Com, 2020.