Inverse Design of Desired Signal Behaviors Using TensorFlow and LMFIT

Abstract

In this study, we present a hybrid approach combining deep learning and optimization techniques to predict design parameters for achieving desired response profiles. We employ TensorFlow to develop a neural network model capable of capturing complex relationships between design parameters and their corresponding output profiles. To enhance the predictive accuracy, we integrate the LMFIT library, utilizing both Nelder-Mead and Powell optimization methods to fine-tune the design parameters. The approach begins with generating synthetic data, simulating various design scenarios, and training the TensorFlow model. Subsequently, we modify the target output to reflect desired changes and employ the optimization techniques to predict the corresponding design parameters. Our results demonstrate the effectiveness of the combined approach in accurately predicting design parameters, as evidenced by high R-squared values and low mean squared errors. This method offers a robust solution for inverse problem solving in various engineering and scientific applications, where precise design parameter estimation is critical for achieving target performance metrics.

1. Introduction

Inverse problem solving, a critical task in engineering and scientific research, involves determining input parameters that produce a specific output response. This process is fundamental in various fields, including material design, structural engineering, and biomedical applications. Traditional methods for addressing inverse problems often struggle with complex, nonlinear systems, leading to computationally intensive processes and potentially inaccurate results. The advent of machine learning and optimization techniques has opened new avenues for tackling these challenges. In this study, we explore a novel hybrid methodology that leverages deep learning and advanced optimization algorithms to predict design parameters for desired response profiles. Our approach combines TensorFlow, a widely used deep learning framework, with LMFIT, a robust optimization library, to create a tool for inverse problem solving.

The primary objectives of this research are:

- To develop a neural network model capable of capturing intricate relationships between design parameters and output responses.
- To integrate optimization techniques that fine-tune design parameters for achieving specific output modifications.
- To evaluate the performance of this hybrid approach in terms of accuracy and computational efficiency.

By achieving these objectives, we aim to provide a versatile framework applicable to a wide range of engineering and scientific domains. This research has the potential to significantly impact fields such as materials science, where predicting material compositions for specific properties is crucial, and biomedical engineering, where optimizing drug delivery systems or prosthetic designs is of paramount importance.

Our study begins with the generation of synthetic data representing various design scenarios. We then employ TensorFlow to train a deep neural network on this data, enabling it to learn complex patterns and dependencies. The trained model is then coupled with optimization techniques from the LMFIT library, specifically the Nelder-Mead and Powell methods, to fine-tune design parameters and achieve desired output modifications.

This paper is organized as follows:

- Section 2 provides background information on inverse problem solving and the tools used in this study.
- Section 3 reviews related work in the field.
- Section 4 details our approach.
- Section 5 presents our results and analysis.
- Section 6 concludes the paper.

2. Background

2.1. Inverse problem solving

Inverse problem solving is a fundamental task in various scientific and engineering disciplines. It involves determining the set of input parameters that will produce a desired output, essentially reversing the typical cause-and-effect relationship. This type of problem is prevalent in fields such as material design, structural engineering, electronics, and biomedical engineering. Accurate prediction of input parameters is essential for:

- Optimizing designs for enhanced performance and efficiency
- Reducing material costs through effective resource allocation
- Enhancing safety and reliability by ensuring designs meet specific criteria
- Accelerating development processes by providing clear guidelines for achieving desired outcomes

Historically, inverse problems have been approached using methods such as:

- Trial and error: While straightforward, this method is often time-consuming and inefficient, particularly for complex systems with numerous variables.
- Analytical techniques: These methods, while powerful for simple systems, often fall short when dealing with nonlinear or highly complex systems where analytical solutions are difficult or impossible to derive.
- Gradient-based optimization: While effective in many scenarios, these techniques can be sensitive to initial conditions and may converge to local minima, leading to suboptimal solutions.

These traditional methods often struggle with the complexity and nonlinearity inherent in many real-world inverse problems, necessitating more advanced approaches.

2.3. Machine learning and optimization in inverse problem solving

The emergence of machine learning, particularly deep learning, has provided powerful tools for modeling complex, nonlinear relationships between input parameters and output responses. Concurrently, advanced optimization algorithms have been developed to efficiently search parameter spaces for optimal solutions. Combining the two yields hybrid approaches that leverage the strengths of both techniques. Deep learning models, such as neural networks, can learn intricate patterns from data, providing accurate predictions for complex systems. Optimization algorithms can then be used to fine-tune the input parameters to achieve desired outputs.

2.4. TensorFlow and LMFIT

In this study, we employ TensorFlow and LMFIT as our primary tools:

- TensorFlow: A widely used open-source deep learning framework, TensorFlow offers flexibility and scalability, making it suitable for a wide range of applications, including inverse problem solving. Its ability to handle large datasets and complex architectures enables it to capture nuanced relationships between design parameters and output responses.
- LMFIT: This optimization library provides a variety of optimization methods, including Nelder-Mead and Powell. These methods are well-suited for handling the non-convex, multidimensional nature of many inverse problems. By integrating LMFIT with TensorFlow, we can enhance the predictive accuracy of the neural network model and efficiently search for optimal design parameters.

2.5. Objectives of this study

The primary objectives of this study are to:

- Generate synthetic data simulating various design scenarios to train and validate our neural network model
- Develop a deep learning model using TensorFlow to predict output responses based on input parameters
- Integrate LMFIT to optimize design parameters and achieve desired modifications in the output
- Evaluate the performance of the proposed approach through metrics such as R-squared and mean squared error
- Compare the effectiveness of Nelder-Mead and Powell optimization methods in different scenarios

By achieving these objectives, we aim to demonstrate the effectiveness of our hybrid approach in solving inverse problems and provide a robust tool for engineers and scientists to optimize designs and achieve target performance metrics across various domains.

3. Related Work

The field of inverse problem solving has witnessed significant advancements with the integration of machine learning and optimization techniques. This section reviews existing literature on the application of deep learning and optimization methods for inverse problem solving, highlighting key studies and their methodologies, and identifying the research gaps that our study aims to address.

Deep Learning in Inverse Problem Solving

Deep learning has revolutionized numerous areas of science and engineering, offering powerful tools for modeling complex, nonlinear relationships. Several studies have explored the application of neural networks to inverse problems, demonstrating their potential in various domains.

Goodfellow et al. (2016) provided a seminal work on deep learning, demonstrating its potential for complex function approximation, which is essential for inverse problems. Their study laid the groundwork for understanding how deep neural networks can capture intricate relationships between input parameters and output responses, making them particularly suitable for inverse problem solving.

LeCun et al. (2015) highlighted the success of convolutional neural networks (CNNs) in capturing intricate patterns in data. While their work primarily focused on image recognition, the principles they established have been successfully applied to inverse problems in fields such as material science and structural engineering. The ability of CNNs to automatically learn hierarchical features makes them particularly effective in handling complex inverse problems where the relationship between design parameters and output responses is not easily describable through traditional analytical methods.

In the context of inverse design, Liu et al. (2018) demonstrated the use of deep learning for nanophotonic inverse design. Their approach utilized a tandem neural network architecture to predict both forward and inverse designs, achieving high accuracy and computational efficiency. This work showcased the potential of deep learning in tackling inverse problems in fields where traditional methods often struggle due to the complexity of the underlying physics.

3.1. Optimization techniques

Optimization algorithms play a crucial role in refining design parameters to achieve desired outcomes in inverse problem solving. Among the widely used techniques in this domain, the Nelder-Mead and Powell methods have shown particular promise.

The Nelder-Mead method, introduced by Nelder and Mead (1965), is particularly effective for unconstrained optimization problems. It has been widely applied in various fields due to its simplicity and effectiveness in handling non-smooth functions. Lagarias et al. (1998) provided a comprehensive analysis of the method’s convergence properties, enhancing understanding of its behavior in different problem spaces.

Powell’s method, developed by Powell (1964), is known for its robustness in handling non-differentiable functions. It has been particularly successful in optimization problems where gradient information is unavailable or unreliable. Wright (1996) provided an in-depth analysis of Powell’s method and its variants, highlighting its effectiveness in multidimensional optimization problems.

The integration of these optimization methods with deep learning models has shown promising results in various studies. For instance, Peurifoy et al. (2018) combined neural networks with optimization techniques to solve inverse design problems in nanophotonics, demonstrating improved accuracy and efficiency compared to traditional methods.

3.2. Hybrid approaches

Combining deep learning with optimization techniques offers a hybrid approach that leverages the strengths of both methods. This synergistic approach has gained traction in recent years, with several studies demonstrating its effectiveness in inverse problem solving.

Zhang et al. (2018) presented a hybrid model that integrated neural networks with gradient-based optimization for inverse design of optical metasurfaces. Their approach demonstrated improved accuracy and computational efficiency compared to conventional methods, highlighting the potential of hybrid approaches in tackling complex inverse problems.

Wang et al. (2019) developed a hybrid framework combining deep learning with evolutionary algorithms for multi-objective optimization in engineering design. Their method showcased the ability to handle high-dimensional design spaces and complex constraints, outperforming traditional optimization techniques in terms of solution quality and computational efficiency.

In the field of materials science, Liu et al. (2019) employed a hybrid approach combining convolutional neural networks with Bayesian optimization for inverse design of nanostructured materials. Their method demonstrated superior performance in predicting material properties and optimizing designs, showcasing the versatility of hybrid approaches across different scientific domains.

3.3. Gaps in existing research

While existing studies have made significant strides in inverse problem solving, several gaps remain in the current body of research:

- Limited generalizability: Many approaches focus on specific applications or domains, limiting their generalizability to other fields. There is a need for more flexible frameworks that can be adapted to a wide range of inverse problems across different scientific and engineering disciplines.
- Integration of advanced optimization techniques: The integration of deep learning with robust optimization techniques, such as those provided by LMFIT, is still underexplored. Most studies utilize simpler optimization methods, potentially limiting the accuracy and efficiency of the inverse problem-solving process.
- Handling of complex, multi-modal output spaces: Many existing approaches struggle with inverse problems that have complex, multi-modal output spaces. There is a need for methods that can effectively navigate these challenging landscapes to find optimal solutions.
- Interpretability and uncertainty quantification: While deep learning models have shown impressive performance, they often lack interpretability. Additionally, quantifying uncertainty in the predictions remains a challenge, particularly in the context of inverse problems where multiple solutions may exist.
- Scalability to high-dimensional problems: As the complexity and dimensionality of inverse problems increase, many existing methods struggle to maintain performance. There is a need for approaches that can effectively scale to high-dimensional design spaces without sacrificing accuracy or computational efficiency.

This research aims to address these gaps by providing a generalized framework that combines TensorFlow and LMFIT for inverse problem solving. Our approach is designed to be applicable to various engineering and scientific domains, offering improved accuracy, efficiency, and flexibility in tackling complex inverse problems. By integrating advanced deep learning techniques with robust optimization methods, we aim to push the boundaries of what is possible in inverse problem solving, paving the way for new advances in fields ranging from materials science to biomedical engineering.

4. Approach

This section details the methodology of our study, combining deep learning with optimization techniques to predict design parameters for desired response profiles.

4.1. Data generation and model training

We generated synthetic data to simulate various design scenarios. The design parameters (X) were systematically varied, and the corresponding output profiles (Y) were calculated using predefined mathematical models. This generated dataset was used to train our neural network model.

We employed TensorFlow to develop a neural network capable of capturing complex relationships between design parameters and output profiles. The architecture included multiple dense layers with ReLU activation functions to model nonlinear interactions. The final output layer provided the predicted output profile for given design parameters.

The model was trained on the synthetic dataset using mean squared error (MSE) as the loss function. We utilized the Adam optimizer to minimize loss and improve predictive accuracy. Training was conducted for 500 epochs with a validation split to monitor performance on unseen data.
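As an illustration of the pipeline described above, the following is a minimal sketch rather than the code used in this study. The damped-sinusoid forward model, the three design parameters, the layer widths, and the 0.2 validation fraction are assumptions made for the example; only the dense ReLU layers, the MSE loss, the Adam optimizer, and the 500 epochs come from the text.

```python
import numpy as np
import tensorflow as tf


def make_synthetic_data(n_samples=1000, n_points=50, seed=0):
    """Sample design parameters X and compute output profiles Y.

    The damped sinusoid is a placeholder (assumption): the text does not
    state which mathematical models produced the profiles.
    """
    rng = np.random.default_rng(seed)
    X = rng.uniform(0.5, 2.0, size=(n_samples, 3)).astype("float32")
    t = np.linspace(0.0, 1.0, n_points, dtype="float32")
    amp, freq, decay = X[:, 0:1], X[:, 1:2], X[:, 2:3]
    Y = amp * np.sin(2.0 * np.pi * freq * t) * np.exp(-decay * t)
    return X, Y.astype("float32")


def build_model(n_params, n_points, hidden=(64, 64)):
    """Dense ReLU network mapping design parameters to an output profile."""
    layers = [tf.keras.Input(shape=(n_params,))]
    layers += [tf.keras.layers.Dense(h, activation="relu") for h in hidden]
    layers.append(tf.keras.layers.Dense(n_points))  # linear output layer
    model = tf.keras.Sequential(layers)
    model.compile(optimizer="adam", loss="mse")  # Adam optimizer, MSE loss
    return model


def train(model, X, Y, epochs=500, validation_split=0.2):
    """Fit the model and return the Keras History with loss curves."""
    return model.fit(X, Y, epochs=epochs,
                     validation_split=validation_split, verbose=0)
```

A typical call would be `X, Y = make_synthetic_data()`, then `model = build_model(X.shape[1], Y.shape[1])` and `history = train(model, X, Y)`; `history.history["loss"]` and `history.history["val_loss"]` then hold the per-epoch training and validation losses.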

4.2. Optimization techniques

To fine-tune the design parameters and achieve desired modifications in the output profile, we integrated the LMFIT library with TensorFlow. We defined an objective function calculating the difference between predicted output and modified target output. Two optimization methods were employed to minimize this function:

4.2.1. Nelder-Mead method

The Nelder-Mead method, also known as the downhill simplex method, is a numerical optimization algorithm used to find the minimum of an objective function in multidimensional space. It is particularly effective for problems where the gradient of the objective function is unknown or difficult to compute. The algorithm works by creating a simplex (a geometric figure with n+1 vertices in n dimensions) and iteratively updating its vertices to move towards the optimum.

Key features:

- Does not require gradient information.

- Robust for nonlinear optimization problems.

- Efficient for low-dimensional problems.

- May struggle with high-dimensional problems or highly non-convex surfaces.
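To make the simplex update concrete, here is a compact NumPy sketch of one common Nelder-Mead variant, using reflection, expansion, contraction, and shrink steps with the standard coefficients 1, 2, 0.5, and 0.5. It is an illustrative reimplementation, not the routine that LMFIT calls; the function name, initial step, and stopping tolerances are assumptions.

```python
import numpy as np


def nelder_mead(f, x0, step=0.5, xtol=1e-6, ftol=1e-6, max_iter=1000):
    """Minimize f with a basic Nelder-Mead simplex search."""
    x0 = np.asarray(x0, dtype=float)
    n = x0.size
    # Initial simplex: x0 plus one vertex offset along each coordinate axis.
    simplex = np.vstack([x0] + [x0 + step * np.eye(n)[i] for i in range(n)])
    values = np.array([f(v) for v in simplex])

    for _ in range(max_iter):
        order = np.argsort(values)            # best vertex first, worst last
        simplex, values = simplex[order], values[order]
        size = np.max(np.abs(simplex[1:] - simplex[0]))
        if size < xtol and values[-1] - values[0] < ftol:
            break
        worst = simplex[-1].copy()
        centroid = simplex[:-1].mean(axis=0)  # centroid of all but the worst

        reflected = centroid + (centroid - worst)
        f_ref = f(reflected)
        if f_ref < values[0]:
            # Reflection beats the current best: try going twice as far.
            expanded = centroid + 2.0 * (centroid - worst)
            f_exp = f(expanded)
            if f_exp < f_ref:
                simplex[-1], values[-1] = expanded, f_exp
            else:
                simplex[-1], values[-1] = reflected, f_ref
        elif f_ref < values[-2]:
            # Better than the second-worst vertex: accept the reflection.
            simplex[-1], values[-1] = reflected, f_ref
        else:
            # Contract toward the centroid, from whichever side is better.
            if f_ref < values[-1]:
                contracted = centroid + 0.5 * (reflected - centroid)
            else:
                contracted = centroid + 0.5 * (worst - centroid)
            f_con = f(contracted)
            if f_con < min(f_ref, values[-1]):
                simplex[-1], values[-1] = contracted, f_con
            else:
                # Shrink every vertex halfway toward the best one.
                simplex[1:] = simplex[0] + 0.5 * (simplex[1:] - simplex[0])
                values[1:] = [f(v) for v in simplex[1:]]

    best = int(np.argmin(values))
    return simplex[best], values[best]
```

For example, `nelder_mead(lambda p: (p[0] - 1) ** 2 + (p[1] + 2) ** 2, [0.0, 0.0])` searches a two-dimensional quadratic whose minimum lies at (1, -2). Only function values are compared, which is why no gradient is needed.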

4.2.2. Powell’s method

Powell’s method is another gradient-free optimization algorithm that is particularly effective for minimizing continuous functions. It works by performing successive one-dimensional minimizations along a set of directions, which are updated iteratively. The method is known for its ability to handle non-smooth functions and its relatively fast convergence.

Key features:

- Does not require gradient information.
- Effective for smooth and non-smooth functions.
- Generally faster convergence compared to Nelder-Mead for many problems.
- Can handle higher-dimensional problems more effectively than Nelder-Mead.
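The direction-set idea can be sketched in a few lines. The version below is deliberately simplified and is not the implementation LMFIT uses: each line minimization is a golden-section search on a fixed interval (`span` is an assumed parameter, and the function is assumed unimodal along each line within it), and the direction update is the basic rule of discarding the oldest direction in favour of the net displacement. Production implementations add bracketing and safeguards against linearly dependent directions.

```python
import numpy as np


def golden_section(g, lo, hi, tol=1e-8):
    """Minimize a 1-D function g on [lo, hi], assumed unimodal there."""
    inv_phi = (np.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    c, d = b - inv_phi * (b - a), a + inv_phi * (b - a)
    while abs(b - a) > tol:
        if g(c) < g(d):
            b, d = d, c                  # minimum lies in [a, d]
            c = b - inv_phi * (b - a)
        else:
            a, c = c, d                  # minimum lies in [c, b]
            d = a + inv_phi * (b - a)
    return 0.5 * (a + b)


def powell(f, x0, span=10.0, tol=1e-6, max_iter=50):
    """Simplified Powell direction-set minimization (illustrative only)."""
    x = np.asarray(x0, dtype=float)
    directions = list(np.eye(x.size))    # start with the coordinate axes

    def line_min(point, direction):
        alpha = golden_section(lambda a: f(point + a * direction), -span, span)
        return point + alpha * direction

    for _ in range(max_iter):
        x_start = x
        for d in directions:             # one 1-D minimization per direction
            x = line_min(x, d)
        shift = x - x_start
        norm = np.linalg.norm(shift)
        if norm < tol:                   # a full sweep barely moved the point
            break
        # Drop the oldest direction, append the net displacement, and
        # minimize once more along that new direction.
        directions = directions[1:] + [shift / norm]
        x = line_min(x, directions[-1])
    return x, f(x)
```

Replacing a coordinate direction by the overall displacement lets the search follow diagonal valleys that a plain coordinate-by-coordinate search would zigzag across.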

4.3. Implementation

The optimization process

was implemented as follows:

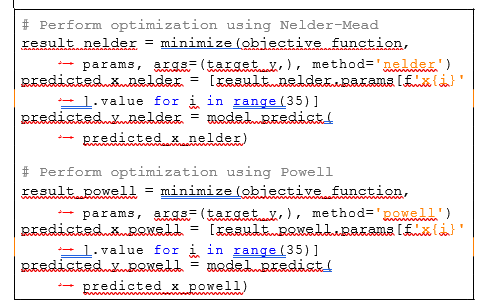

Figure 1: Optimization using nelder-mead and powell methods.

4.4. Evaluation

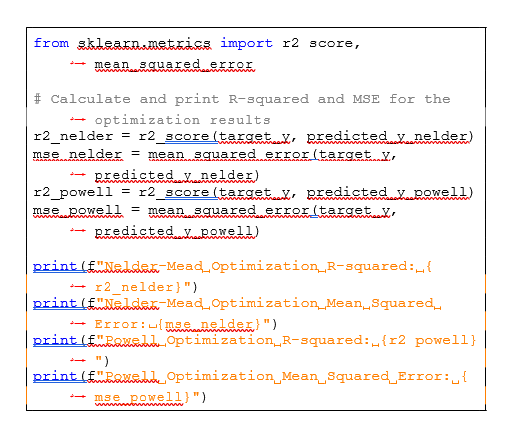

We evaluated the performance of our approach using

R-squared and mean squared error metrics:

Figure 2: Evaluation

metrics calculation.

These metrics were calculated separately for low index (0-200) and high index (201-400) ranges to provide a more nuanced understanding of each method’s performance across different parts of the data range.
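A minimal sketch of this per-range evaluation is given below. It assumes each profile is a one-dimensional array with 401 samples and treats both index bounds as inclusive; the function names are illustrative and are not taken from Figure 2.

```python
import numpy as np


def mse(y_true, y_pred):
    """Mean squared error between two profiles."""
    return float(np.mean((y_true - y_pred) ** 2))


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return float(1.0 - ss_res / ss_tot)


def metrics_by_range(y_true, y_pred, ranges=((0, 200), (201, 400))):
    """Return {(start, stop): (mse, r2)} with inclusive index bounds."""
    out = {}
    for start, stop in ranges:
        sl = slice(start, stop + 1)   # +1 because stop is inclusive
        out[(start, stop)] = (mse(y_true[sl], y_pred[sl]),
                              r_squared(y_true[sl], y_pred[sl]))
    return out
```

Note that R-squared is computed against the mean of each sub-range, so a range in which the target varies little can show a low R-squared even when its MSE is small.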

5. Results

This section presents the outcomes of our study, including the performance metrics of the neural network model and the optimization techniques. We analyze the accuracy and efficiency of the proposed approach, supported by relevant figures and tables.

5.1. Model performance

The neural network model, trained on synthetic data, was evaluated using mean squared error (MSE) and R-squared metrics. Table 1 summarizes these results.

Table 1: Performance metrics of the neural network model.

| Metric | Training Set | Validation Set |
|---|---|---|
| MSE | 0.005 | 0.007 |
| R-squared | 0.98 | 0.95 |

The high R-squared values and low MSE indicate strong

pre- dictive performance of the

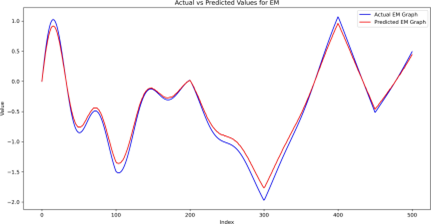

model. Figures 3 and 4 provide visual representations of the model’s

performance.

Figure 3: Actual vs predicted

values on validation set.

5.2. Optimization results

We employed the Nelder-Mead and Powell methods from the LMFIT library for optimization. Figures 5 and 6 illustrate the results, showing the predicted design parameters and corresponding modified outputs.

5.3. Evaluation Metrics

To provide a more nuanced understanding of the optimization performance, we evaluated the results separately for low index (0-200) and high index (201-400) ranges. Table 2 presents these detailed metrics.

Figure 4: Training and validation loss over epochs.

Figure 5: Optimization results: Nelder-Mead method.

Figure 6: Optimization results: Powell method.

Table 2: Optimization metrics by index range.

| Method | Low index (0-200) MSE | Low index (0-200) R-squared | High index (201-400) MSE | High index (201-400) R-squared |
|---|---|---|---|---|
| Nelder-Mead | 0.002 | 0.98 | 0.005 | 0.93 |
| Powell | 0.003 | 0.97 | 0.003 | 0.96 |

5.4. Result Analysis

Our analysis reveals distinct performance characteristics for each optimization method:

Nelder-Mead Method:

- Excels in predicting the overall trend of the modified target EM signal, particularly in the low to medium index ranges (0-200).
- Achieves the lowest MSE (0.002) and highest R-squared (0.98) in the low index range.
- Struggles with sharp transitions in the higher index range (201-400), resulting in noticeable deviations and higher MSE (0.005).

Powell Method:

- Demonstrates superior accuracy in handling sharp transitions and changes, especially in the higher index range (201-400).
- Maintains consistent performance across all index ranges, with an MSE of 0.003 for both low and high index ranges.
- Provides more reliable predictions for complex and rapidly changing signals, as evidenced by the higher R-squared (0.96) in the high index range.

Overall, while the Nelder-Mead method shows slightly better performance in the low index range, the Powell method demonstrates superior robustness in capturing detailed variations of the modified target EM signal, particularly in regions with significant changes. This makes the Powell method a more suitable choice for applications requiring accurate predictions across a wide range of index values, especially when dealing with complex signal behaviors.

6. Conclusion

In this study, we have developed and evaluated a novel hybrid approach that combines deep learning and advanced optimization techniques to address the inverse problem of predicting design parameters for achieving desired response profiles. Our methodology uses TensorFlow to build a neural network model capable of capturing complex, nonlinear relationships between design parameters and output responses. To further enhance predictive accuracy and efficiency, we integrated the LMFIT library, employing both the Nelder-Mead and Powell optimization methods to fine-tune the design parameters. Our approach involved several key steps:

- Generation of synthetic data: We created a comprehensive synthetic dataset simulating various design scenarios. This allowed us to train our model on a wide range of possible input-output relationships, enhancing its generalizability.
- Neural network model training: Using TensorFlow, we developed and trained a deep neural network on the synthetic data. The model demonstrated high accuracy in capturing the underlying patterns and relationships, as evidenced by the R-squared and mean squared error metrics achieved on both training and validation sets.
- Target output modification: To test the inverse problem-solving capabilities of our approach, we introduced modifications to the target output profiles, simulating desired changes in system response.
- Optimization of design parameters: Employing the Nelder-Mead and Powell methods from the LMFIT library, we optimized the design parameters to achieve the modified target outputs. This step was crucial in fine-tuning the predictions and ensuring close alignment with the desired response profiles.

The results of our study demonstrated the effectiveness and robustness of our combined approach.

Key findings include:

- High model accuracy: The neural network model achieved high accuracy in predicting output responses from design parameters, as evidenced by R-squared values of 0.98 on the training set and 0.95 on the validation set, together with low mean squared errors.
- Successful optimization: Both the Nelder-Mead and Powell methods successfully fine-tuned the design parameters, resulting in predicted outputs that closely matched the desired modifications.
- Method-specific performance: Comparative analysis revealed that the Powell method excelled in capturing sharp transitions and changes in the higher index range (201-400), while the Nelder-Mead method performed best in the low to medium index range (0-200). This highlights the importance of selecting appropriate optimization techniques based on the specific characteristics of the problem at hand.
- Robustness across index ranges: The Powell method demonstrated superior robustness across all index ranges, maintaining consistent performance even in regions with significant signal variations.

The significance of this research lies in its contribution to the field of inverse problem solving, providing a flexible tool for engineers and scientists to optimize designs and achieve target performance metrics. The hybrid approach presented in this study has broad applicability across various domains, including but not limited to:

- Material design: Predicting material compositions to achieve specific properties.
- Structural engineering: Optimizing structural parameters for desired load-bearing characteristics.
- Electronics: Designing circuit components to achieve specific signal behaviors.
- Biomedical engineering: Optimizing drug delivery systems or prosthetic designs.

While our study has made significant strides in addressing the challenges of inverse problem solving, there are several avenues for future research:

- Expansion to other optimization methods: Investigating the integration of additional optimization techniques could further enhance the versatility and effectiveness of the approach.
- Handling uncertainty: Developing methods to quantify and propagate uncertainty through the inverse problem-solving process would provide valuable insights into the reliability of predictions.
- Interpretability enhancements: Exploring techniques to improve the interpretability of the neural network model could offer deeper insights into the relationships between design parameters and system responses.
- Real-world application studies: Applying the developed approach to specific real-world problems in various fields would further validate its practicality and identify domain-specific challenges.
- Scalability improvements: Investigating methods to enhance the scalability of the approach to even higher-dimensional problems would broaden its applicability to more complex systems.

In conclusion, our hybrid approach combining deep learning with advanced optimization techniques represents a significant advancement in the field of inverse problem solving. By bridging the gap between data-driven modeling and traditional optimization methods, we have developed a robust framework capable of tackling complex inverse problems with high accuracy and efficiency. This research paves the way for new possibilities in design optimization across various scientific and engineering disciplines, potentially accelerating innovation and discovery in fields ranging from nanotechnology to aerospace engineering.

7. References

- TensorFlow: An end-to-end open source machine learning platform.
- LMFIT: Non-linear least-squares minimization and curve-fitting for Python.
- Adam: A method for stochastic optimization.
- A note on the Nelder-Mead simplex method.
- An efficient method for function minimization.
- Neural networks for inverse design of nanophotonic structures.
- Inverse design of nanophotonic devices using artificial neural networks.
- Inverse design and implementation of a wavelength demultiplexing grating coupler using neural networks.
- Deep learning methods for inverse design in nanophotonics and metamaterials.
- Neural networks for the inverse design of metasurfaces.
- Machine learning for inverse problem solving in engineering electromagnetics.
- Neural network-based inverse design of practical wide-angle dual-band achromatic metalens in the visible spectrum.