Unmasking Bias: A Framework for Testing and Mitigating AI Bias in Insurance Underwriting Models

Abstract

The integration of

Artificial Intelligence (AI) in insurance underwriting has catalyzed a paradigm

shift towards hyper-personalized risk assessment and operational optimization.

However, the proliferation of AI-powered models in this space introduces a latent

risk - algorithmic bias - which, if unaddressed, can perpetuate systemic

inequities, exposing insurers to compliance violations, reputational risks and

ethical dilemmas. This research delves into the critical need for rigorous bias

testing frameworks within AI-driven underwriting models, advocating for a

multi-faceted approach that incorporates fairness metrics, data sanitization

and transparency enhancing mechanisms. Leveraging advanced explainable AI (XAI)

techniques and fairness-centric model architectures, we propose a comprehensive

bias detection and mitigation strategy that spans the entirety of the AI

lifecycle, from pre-processing through to post-deployment monitoring. By

embedding continuous calibration loops and real-time fairness monitoring, this

paper posits that insurers can not only mitigate the risk of algorithmic

discrimination but also foster a future of equitable, compliant and transparent

underwriting systems. Through a confluence of machine learning fairness

strategies, regulatory adherence protocols and ethical AI practices, this work

lays the foundation for a transformative shift towards trustworthy AI in the

insurance domain.

Keywords: AI-driven Underwriting,

Algorithmic Bias, Fairness in AI, Bias Testing Frameworks, Explainable AI

(XAI), Ethical AI, AI Bias Mitigation, Equitable Underwriting, Bias Detection

Algorithms,

1. Introduction

The

convergence of Artificial Intelligence (AI) with the insurance underwriting

process marks a pivotal transformation in the industry, heralding an era

characterized by data-driven decision-making and hyper-personalized risk

profiling. Leveraging sophisticated machine learning (ML) algorithms, insurers

now harness vast amounts of structured and unstructured data to generate highly

accurate predictions, optimize pricing strategies and enhance operational

efficiencies. AI-driven underwriting models promise not only unprecedented

levels of precision but also the agility to dynamically adjust to fluctuating

market conditions, customer behaviors and evolving risk landscapes. However,

the rapid deployment of AI in underwriting introduces a latent yet formidable

challenge-algorithmic bias-which threatens the integrity, fairness and equity

of automated decision-making systems.

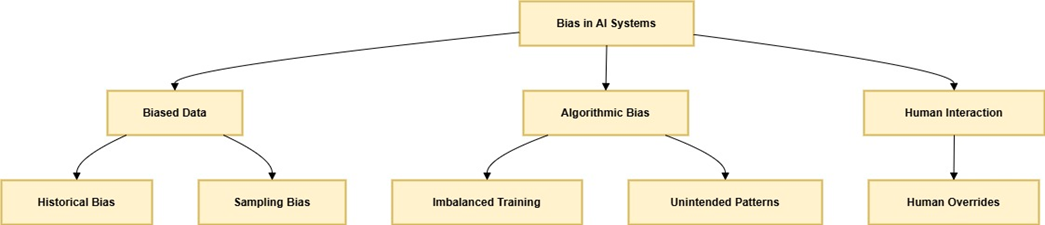

Bias

in AI arises from a multitude of sources, including biased training data,

inadvertent algorithmic assumptions and hidden feature correlations, all of

which can distort predictions and lead to discriminatory outcomes. In the

context of insurance, such biases manifest as unfair risk assessments, where

certain demographic groups—often marginalized or underrepresented—may be

subjected to higher premiums, denied coverage or receive suboptimal policy

conditions based on non-relevant or unjust factors. As AI models rely heavily

on historical datasets that may perpetuate these biases, even the most advanced

machine learning systems are vulnerable to replicating societal inequities. The

result is not only a violation of ethical principles but also a legal minefield,

as discriminatory practices in underwriting violate numerous

anti-discrimination laws such as the Equal Credit Opportunity Act (ECOA) and

other regulations that prohibit biases based on race, gender, ethnicity and

other protected characteristics.

Given

these risks, there is an urgent need for bias testing and mitigation in

AI-driven underwriting systems to ensure that these technologies serve their

intended purpose - empowering insurers to make fair, transparent and compliant

decisions. Bias detection is not a monolithic task but rather a multifaceted

challenge that spans the entire lifecycle of AI model development—from the

initial stages of data collection and preprocessing to the subsequent phases of

model training, deployment and post-deployment monitoring. Addressing this

challenge necessitates the integration of fairness metrics such as demographic

parity, equal opportunity and individual fairness into the core fabric of AI

model design, fostering a more comprehensive and equitable model development

pipeline.

Furthermore,

the opacity inherent in many AI models, particularly in deep learning-based

systems, exacerbates the difficulty of detecting and addressing bias. These

so-called “black box” models offer little transparency in terms of how

decisions are made, rendering it challenging to ascertain the exact reasons for

bias or discriminatory behavior. Consequently, the adoption of Explainable AI

(XAI) techniques becomes indispensable, enabling stakeholders-from data

scientists and business leaders to regulatory bodies and end-users—to gain

insight into the decision-making processes of these models. Model

interpretability not only aids in identifying potential biases but also ensures

accountability and trustworthiness in AI-driven decision-making.

In

this paper, we propose a comprehensive bias testing framework designed to

detect, assess and mitigate biases within AI-based underwriting systems in the

insurance industry. Our framework incorporates automated fairness audits,

adversarial debiasing algorithms and data sanitization techniques to identify

biased patterns and ensure that models adhere to both legal and ethical

standards. Additionally, we emphasize the importance of ongoing post-deployment

monitoring, using real-time fairness dashboards and continuous model

recalibration strategies to mitigate any drift or emerging biases that may arise

once models are operationalized. The integration of such rigorous testing

practices not only aligns with regulatory mandates but also reinforces

insurers’ commitments to equitable business practices, customer trust and

long-term sustainability.

By

leveraging cutting-edge AI fairness strategies, this paper aims to bridge the

gap between technological innovation and social responsibility in the insurance

sector. Through the implementation of a robust bias testing and mitigation

framework, insurers can ensure that their AI-powered underwriting models

deliver outcomes that are not only accurate but also fair, transparent and in

line with broader societal values.

2. Challenges

of Bias in AI-Driven Underwriting

The

incorporation of Artificial Intelligence (AI) into insurance underwriting has

undeniably revolutionized the industry's operational landscape. However, the

underlying challenges posed by algorithmic bias threaten to undermine the

efficiency, fairness and transparency that these innovations promise. Below, we

explore the multi-dimensional challenges of bias in AI-driven underwriting

systems, delving into the technical, ethical and operational complexities.

2.1 Data Quality and

Representational Bias

AI

models are fundamentally data-driven and their efficacy is intrinsically tied

to the quality, diversity and representativeness of the data they are trained

on. Unfortunately, real-world datasets often carry the imprint of historical

inequities and systemic biases. In the context of underwriting, this translates

to training data that may disproportionately underrepresent minority

populations or encode discriminatory patterns reflective of past decisions.

Sampling

bias can

lead to disparate impact, where

models systematically disadvantage certain demographic groups.

Feature

engineering bias

arises when proxy variables in the dataset inadvertently correlate with

sensitive attributes like race, gender or socioeconomic status.

Historical

bias

perpetuates systemic inequities, even when a model is technically accurate, by

reflecting discriminatory practices embedded in historical decision-making

processes.

Addressing

these biases requires advanced data preprocessing techniques such as

re-sampling, re-weighting and synthetic data generation to ensure that training

datasets are both diverse and balanced.

2.2 Model Design and Hidden

Bias Propagation

AI

models, particularly those utilizing deep learning architectures, are often

susceptible to hidden bias propagation during training. These models

automatically identify patterns and relationships within data, but without

explicit fairness constraints, they may amplify or even create new forms of

bias.

Complexity

in feature interaction: High-dimensional feature spaces in AI models make it challenging

to interpret which features are driving predictions, increasing the risk of

latent bias.

Algorithmic

opacity:

Many AI models, particularly those employing ensemble methods or neural

networks, function as “black boxes,” limiting visibility into the

decision-making process. This lack of interpretability complicates the

detection of biased behavior and impedes stakeholder trust.

Bias

amplification loops: Feedback loops created by biased model predictions can lead to

compounding inequities, especially in iterative systems where predictions

influence future datasets.

To

mitigate these issues, the adoption of fairness-aware machine learning

techniques and model explainability tools is critical.

2.3 Regulatory and Ethical

Constraints

AI-driven

underwriting systems operate in a highly regulated domain, with stringent laws

and guidelines designed to protect consumers from discrimination. However,

compliance with these regulations-such as the Fair Housing Act (FHA) or the

Equal Credit Opportunity Act (ECOA)-is increasingly complex when using AI

models.

Regulatory

ambiguity:

Many existing regulations were not designed with AI in mind, leading to

uncertainty about how compliance should be interpreted and enforced in

algorithmic contexts.

Unintended

discrimination:

Even when direct discrimination is avoided, indirect discrimination, where

seemingly neutral variables disproportionately affect protected groups, can

result in significant legal repercussions.

Ethical

considerations:

Beyond legal compliance, insurers face mounting pressure to adhere to ethical

AI principles, such as transparency, accountability and fairness, which require

proactive measures to identify and eliminate bias.

Navigating

these challenges necessitates the integration of fairness audits, bias-testing

frameworks and cross-functional collaborations involving data scientists, legal

teams and ethicists.

2.4 Post-Deployment

Challenges and Bias Drift

Bias

does not cease to be a concern once an AI model is deployed. Bias drift or the

emergence of biases over time due to shifts in the underlying data

distribution, poses a significant challenge to the long-term reliability of

AI-driven underwriting systems.

Dynamic

risk profiles:

Changes in market conditions, demographics or societal norms can render

initially fair models biased over time.

Adversarial

exploitation:

Malicious actors may attempt to exploit algorithmic vulnerabilities,

introducing biases that were not present during initial training.

Monitoring

and recalibration: Continuous monitoring and recalibration are essential to ensure

that models remain aligned with fairness objectives, but these processes often

require substantial computational and operational resources.

To

combat these issues, insurers must deploy real-time fairness monitoring

systems, incorporating automated bias detection algorithms and self-healing

models capable of recalibrating their decision-making processes autonomously.

2.5 Business and Operational

Impacts

The

presence of bias in AI-driven underwriting systems can have far-reaching

implications for insurers, affecting not only their compliance posture but also

their business outcomes and reputational standing.

Customer

trust erosion:

Discriminatory practices, whether real or perceived, can lead to public

backlash, damaging an insurer’s brand and eroding customer trust.

Operational

inefficiencies:

Biased models may lead to suboptimal decision-making, resulting in financial

losses due to mispriced policies or increased claims ratios.

Competitive disadvantage: As the industry moves toward ethical AI adoption, insurers failing to address bias risk being left behind, both in terms of technological capability and customer appeal.

By

embedding bias-mitigation strategies into their AI pipelines, insurers can not

only protect themselves from potential liabilities but also position themselves

as leaders in the ethical application of AI technology.

3. Bias

Testing Framework for AI-Driven Insurance Underwriting

To ensure fairness, transparency

and accountability in AI-driven insurance underwriting, a robust bias testing

framework must address the lifecycle stages of AI systems. By integrating

real-world examples, this section demonstrates the practical implementation of

bias detection, evaluation and mitigation.

3.1 Data Preprocessing and

Bias Detection

Challenge: An insurer trains an AI

model to predict policyholder risk using historical data, but the data

disproportionately represent urban male policyholders. This underrepresentation

of rural and female applicants leads to biased predictions.

Framework Implementation:

Example: The training dataset includes demographic features like location (urban/rural) and gender. Analysis reveals a skewed distribution, with 80% urban male applicants.

Solution: Apply oversampling

techniques to balance the data by adding synthetic rural and female applicant

records using SMOTE (Synthetic Minority Over-sampling Technique).

Use Aequitas, a fairness assessment

tool, to calculate metrics like the disparate impact ratio, ensuring balanced

representation across demographics.

By addressing these imbalances, the

model is less likely to favor urban male applicants in its predictions.

3.2 Bias-Aware Model

Training

Challenge: A neural network underwriting model uses income as a feature. However, income correlates strongly with gender in the dataset, leading to lower approval rates for female applicants.

Framework

Implementation:

Example: During training, the

insurer applies adversarial debiasing, where a secondary model tries to predict

gender from the underwriting model’s predictions.

Outcome: If

the adversarial model succeeds in identifying gender, the primary model is

penalized, forcing it to make predictions independent of gender.

Use Explainable AI tools to ensure

that the model’s decisions are driven by neutral factors like credit score or

claim history, not proxy variables for gender.

This ensures the final model treats

applicants equitably, regardless of gender.

3.3 Bias Evaluation Metrics

Challenge: After training, the model shows a 10% higher rejection rate for applicants from minority ethnic groups compared to the majority group.

Framework

Implementation:

Example: Calculate

fairness metrics like:

Equal

Opportunity Difference: Measures whether qualified minority

applicants have the same approval rates as majority applicants.

Counterfactual

Fairness: Test whether changing an applicant’s ethnicity while keeping other

features constant changes the approval decision.

Results indicate a disparity in approval rates caused by historical bias in the dataset.

This evaluation allows insurers to

pinpoint and address systemic biases, ensuring fair outcomes.

3.4 Deployment with

Real-Time Bias Monitoring

Challenge: Post-deployment, a drift in the data distribution causes the model to reject a higher proportion of rural applicants over time.

Framework

Implementation:

Example: Implement

real-time fairness monitoring dashboards using tools

Detect concept drift, where the

proportion of rural applicants in the data shifts from 20% to 30%, creating new

biases in predictions.

Deploy automated alerts that trigger retraining of the model with updated data to recalibrate fairness.

This proactive approach prevents

bias from accumulating and ensures continuous fairness in underwriting

decisions.

3.5 Bias Mitigation

Strategies

Challenge: A

model trained to predict premium pricing systematically assigns higher premiums

to older applicants, even when risk factors are identical to younger

applicants.

Framework

Implementation:

Example: Apply

post-hoc mitigation by adjusting premium thresholds using recalibration

techniques.

Recalculate premiums using a

fairness-aware algorithm that equalizes pricing for applicants with similar

risk profiles, regardless of age.

Implement latent variable disentanglement to remove age-related biases from the model’s intermediate computations.

This ensures that age is only

considered where directly relevant to risk assessment, maintaining equitable

pricing.

3.6 Regulatory and Ethical

Compliance

Challenge: An

insurer faces regulatory scrutiny under the Equal Credit Opportunity Act (ECOA)

after a model disproportionately denies coverage to applicants from

lower-income regions.

Framework Implementation:

Example: Conduct

a bias audit comparing the model’s outcomes against regulatory fairness

standards.

Partner with external auditors to

validate that the model adheres to ethical AI principles like transparency and

accountability.

Use a fairness checklist aligned with ECOA to ensure compliance.

The audit not only ensures legal

compliance but also builds public trust in the insurer’s underwriting

practices.

3.7 Stakeholder

Collaboration

Challenge: End-users (insurance agents) report that the model frequently denies coverage without clear explanations, leading to dissatisfaction and lack of trust.

Framework

Implementation:

Example: Facilitate

collaboration between data scientists and agents by integrating explainable AI

(XAI) modules into the underwriting system.

Agents can access explanations for

each decision, such as “The denial was based on low credit score and high claim

history risk.”

Incorporate feedback loops where agents highlight questionable decisions, allowing data scientists to refine the model.

This approach ensures that the

underwriting system aligns with both technical objectives and real-world needs.

4. Case

Study

Addressing Bias in AI-Driven Life Insurance Underwriting

Background

A leading life insurance provider implemented an AI-driven underwriting system to automate and enhance its policy approval process. The system utilized machine learning algorithms to assess applicant risk based on demographic, medical and financial data. While the solution reduced processing time and operational costs, an internal audit revealed evidence of bias in policy decisions. Certain demographic groups experienced higher rejection rates or less favorable premium pricing, raising ethical, regulatory and reputational concerns.

Challenge

The insurer faced the following challenges related to bias in its AI-driven underwriting system:

Data Bias: Historical data used

for training reflected societal inequalities, such as underrepresentation of

certain ethnic groups.

Algorithmic

Bias:

The machine learning model disproportionately weighed specific attributes

(e.g., zip codes or income levels) that indirectly correlated with race and

socioeconomic status.

Regulatory

Risks:

Bias in the decision-making process posed compliance risks under

anti-discrimination laws.

Reputational Damage: Reports of unfair treatment could erode trust among customers and stakeholders.

Intervention

Using the Bias Testing Framework

To address these challenges, the insurer adopted the Bias Testing Framework described in this paper. The steps included:

Bias

Identification:

Conducted exploratory data analysis (EDA) to identify skewed distributions in

the training dataset, focusing on demographic variables like age, gender and

ethnicity.

Leveraged Explainable AI (XAI)

tools to examine feature importance and identify disproportionately impactful

variables.

Used fairness metrics such as demographic parity and equal opportunity to measure bias

in the model's decisions.

Bias

Mitigation:

Deployed re-sampling techniques to

balance underrepresented groups in the training data.

Implemented algorithmic debiasing methods, such as

adversarial debiasing, to adjust the model's decision boundaries.

Introduced constraints during model

training to enforce fairness without compromising accuracy.

Bias

Monitoring:

Established a real-time monitoring system to track bias metrics in production,

flagging anomalies for human review.

Conducted periodic fairness audits

to ensure compliance with evolving regulatory standards and societal norms.

Stakeholder

Collaboration:

Engaged with regulators to validate bias mitigation practices.

Conducted focus groups with policyholders to understand their concerns and perspectives.

Outcome

The insurer achieved the following

outcomes through the intervention:

Improved

Fairness:

The updated AI model demonstrated a significant reduction in bias-related

disparities across demographic groups.

Regulatory Compliance: The bias testing and mitigation framework ensured adherence to anti-discrimination laws, reducing regulatory exposure.

Enhanced

Trust:

Transparent communication of the steps taken to address bias strengthened

customer and stakeholder confidence in the company.

Sustained

Performance:

Despite fairness constraints, the AI system maintained high accuracy and

efficiency, achieving the dual goals of ethical and operational excellence.

Lessons

Learned

Proactive

Bias Management:

Early identification and correction of biases prevent downstream ethical and

reputational risks.

Continuous

Monitoring:

Bias in AI models is not static; ongoing evaluation and adaptation are

necessary.

Multi-Stakeholder

Involvement:

Collaboration with regulators, customers and internal teams ensures

comprehensive and sustainable solutions.

Conclusion

This case study illustrates the

practical application of the Bias Testing Framework to real-world insurance

underwriting challenges. By prioritizing fairness alongside efficiency, the

insurer not only mitigated bias but also set a benchmark for ethical AI

practices in the insurance industry.

Future

Directions

The future directions for bias

testing in AI-driven insurance underwriting models present significant

opportunities to enhance fairness, transparency and ethical accountability in

the industry. As AI technologies become increasingly integrated into

decision-making processes within insurance, addressing and mitigating biases in

these systems will be essential for fostering trust and ensuring equitable outcomes

for all stakeholders. This section explores emerging trends and potential

avenues for advancing bias detection and correction in AI models, highlighting

key areas of development such as the integration of Explainable AI (XAI),

adaptive mitigation techniques and the establishment of standardized fairness

metrics. Additionally, the future of bias testing extends beyond technical

solutions to encompass ethical, societal and regulatory considerations, paving

the way for a more inclusive and responsible application of AI in insurance.

Integration

of Explainable AI (XAI): Future research could focus on using XAI to improve transparency and help insurers understand how biases

are introduced into AI underwriting decisions.

Adaptive

Bias Mitigation: Developing adaptive

bias correction techniques that evolve with new data, ensuring

real-time bias detection and correction in underwriting models.

Multi-Dimensional

Fairness Metrics: Expanding fairness metrics to consider various factors like

economic and social outcomes, providing a more comprehensive approach to

fairness in AI models.

Collaboration

with Regulators: Working with regulatory

bodies to establish standardized bias

testing guidelines for AI systems in insurance, ensuring ethical

and compliant use.

Cross-Domain

Bias Detection:

Examining how biases from other industries (e.g., credit scoring, healthcare)

affect AI-driven insurance models and addressing these issues with

cross-disciplinary insights.

Long-Term

Impact Analysis: Studying the long-term

effects of bias mitigation on business outcomes like customer

satisfaction, trust and financial performance.

AI and

Human Collaboration: Combining AI and

human expertise to detect complex biases that AI alone may not

identify, ensuring a more holistic approach to fairness.

Real-Time

Bias Detection:

Implementing real-time bias

detection systems in live underwriting environments to

automatically flag and correct biased decisions as they happen.

Ethical

and Societal Implications: Investigating the broader ethical and societal implications of AI bias mitigation,

ensuring fairness while addressing historical inequalities.

Multi-Stakeholder

Involvement:

Involving multiple stakeholders (e.g.,

customers, policymakers) in the bias testing process to develop more inclusive

and diverse fairness frameworks.

5.

Conclusion

In conclusion, the integration of

AI-driven models in insurance underwriting heralds a transformative shift in

the industry, offering unparalleled efficiency and personalization. However,

this advancement is fraught with the potential for inherent biases that could

undermine fairness and perpetuate systemic inequities. This paper has

illuminated the critical need for robust bias testing frameworks that can

systematically identify, measure and mitigate biases embedded within AI

systems. Through a comprehensive exploration of strategies such as Explainable

AI (XAI), adaptive bias correction mechanisms and the development of

multi-dimensional fairness metrics, we have outlined a path toward more

transparent, accountable and ethically sound AI systems in insurance.

Furthermore, the collaboration

between technologists, regulators and stakeholders is essential in ensuring

that AI models are not only optimized for performance but are also designed to

foster social equity and trust. By embracing the future directions highlighted

in this paper - ranging from real-time bias detection to cross-domain bias

analysis - insurance companies can lead the charge in establishing

industry-wide standards for fairness and inclusivity. Ultimately, the evolution

of bias testing in AI-driven underwriting will not only enhance the operational

integrity of insurance platforms but will also serve as a cornerstone for a

more responsible, customer-centric approach in the ever-evolving landscape of

AI-powered decision-making.

6.

References

- Drew Roselli, Jeanna Matthews and Nisha Talagala. Managing Bias in

AI. In Companion Proceedings of the 2019 World Wide Web Conference, San

Francisco, CA USA, May 2019 (WWW '19 Companion), 2019;10.

- McKinsey Global Institute

“Artificial Intelligence: The Next Digital Frontier?”, 2017.

- Kahn J. “Artificial

Intelligence Has Some Explaining to Do”. Bloomberg Businessweek, 2018.

- Dastin J. “Amazon scraps secret AI

recruiting tool that showed bias against women”. Reuters Business News, 2018.

- Goodman B and Flaxman S. “European

Union regulations on algorithmic decision-making and a 'right to explanation'”.

ICML Workshop on Human Interpretability in Machine Learning, New York, NY,

2016.

- Buolamwini J.

“Aritificial Intelligence Has a Problem with Gender and Racial Bias”. TIME,

2019.

- Ribeiro MT, Singh S and Guestrin C. “’Why Should I Trust you?’: Explaining the Predictions of Any Classifier”. Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. San Francisco, CA, 2016.